Interacting with Red Hat Developer Lightspeed for Red Hat Developer Hub

Leverage Artificial Intelligence (AI)-driven expertise of the Red Hat Developer Lightspeed for Red Hat Developer Hub (Developer Lightspeed for RHDH) virtual assistant to help you use Red Hat Developer Hub (RHDH)

- 1. About Developer Lightspeed for RHDH

- 2. Supported architecture for Red Hat Developer Lightspeed for Red Hat Developer Hub

- 3. Retrieval augmented generation (RAG) embeddings

- 4. Install and configure Red Hat Developer Lightspeed for Red Hat Developer Hub

- 5. Customize Developer Lightspeed for RHDH

- 6. Solve project-specific challenges with Developer Lightspeed for RHDH Notebooks

- 7. Get AI-assisted help for your development tasks

- 8. AI model evaluation data to select the right AI model

- 8.1. Configure the evaluation environment to validate model accuracy

- 8.2. Prepare evaluation datasets to verify AI-generated responses

- 8.3. Run performance tests to ensure AI response reliability

- 8.4. Analyze evaluation results to identify performance gaps

- 8.5. Evaluation metrics and historical data reference

- 8.6. Release report and historical data

- 9. Appendix: LLM requirements

- 10. Appendix: About user data security

Abstract

Red Hat Developer Lightspeed for Red Hat Developer Hub (Developer Lightspeed for RHDH) is an AI-powered virtual assistant for Red Hat Developer Hub (RHDH). You can interact with Developer Lightspeed for RHDH to explore RHDH capabilities in detail.

1. About Developer Lightspeed for RHDH

This early access program enables customers to share feedback on the user experience, features, capabilities, and any issues encountered. Your input helps ensure that Developer Lightspeed for RHDH better meets your needs when it is officially released and made generally available.

This section describes Developer Preview features in the Red Hat Developer Lightspeed for Red Hat Developer Hub plugin. Developer Preview features are not supported by Red Hat in any way and are not functionally complete or production-ready. Do not use Developer Preview features for production or business-critical workloads. Developer Preview features provide early access to functionality in advance of possible inclusion in a Red Hat product offering. Customers can use these features to test functionality and provide feedback during the development process. Developer Preview features might not have any documentation, are subject to change or removal at any time, and have received limited testing. Red Hat might provide ways to submit feedback on Developer Preview features without an associated SLA.

For more information about the support scope of Red Hat Developer Preview features, see Developer Preview Support Scope.

Developer Lightspeed for RHDH provides a natural language interface within the RHDH console, helping you easily find information about the product, understand its features, and get answers to your questions.

You can experience Developer Lightspeed for RHDH Developer Preview by installing the Developer Lightspeed for RHDH plugin within an existing RHDH instance. Alternatively, if you prefer to test it locally first, you can try Developer Lightspeed for RHDH using RHDH Local.

Developer Lightspeed for RHDH uses a floating action button (FAB) instead of a sidebar navigation item. If your environment uses another global FAB, you must move the existing button or disable it to prevent interface elements from overlapping. In your dynamic plugin configuration file, make the following update:

    - package: ./dynamic-plugins/dist/red-hat-developer-hub-backstage-plugin-bulk-import
      disabled: true
      pluginConfig:
        dynamicPlugins:
          frontend:
            red-hat-developer-hub.backstage-plugin-bulk-import:
              mountPoints:
                - mountPoint: global.floatingactionbutton/config
                  importName: BulkImportPage # Example
                  config:
                    slot: 'bottom-left'
                    icon: BulkImportIcon
                    label: 'Bulk import'
                    toolTip: 'Register multiple repositories in bulk'
                    to: /bulk-import
              translationResources:
                - importName: bulkImportTranslations
                  module: Alpha
                  ref: bulkImportTranslationRef
              appIcons:
                - name: bulkImportIcon
                  importName: BulkImportIcon
              dynamicRoutes:
                - path: /bulk-import
                  importName: BulkImportPage
                  menuItem:
                    icon: bulkImportIcon
                    text: Bulk import
                    textKey: menuItem.bulkImport

2. Supported architecture for Red Hat Developer Lightspeed for Red Hat Developer Hub

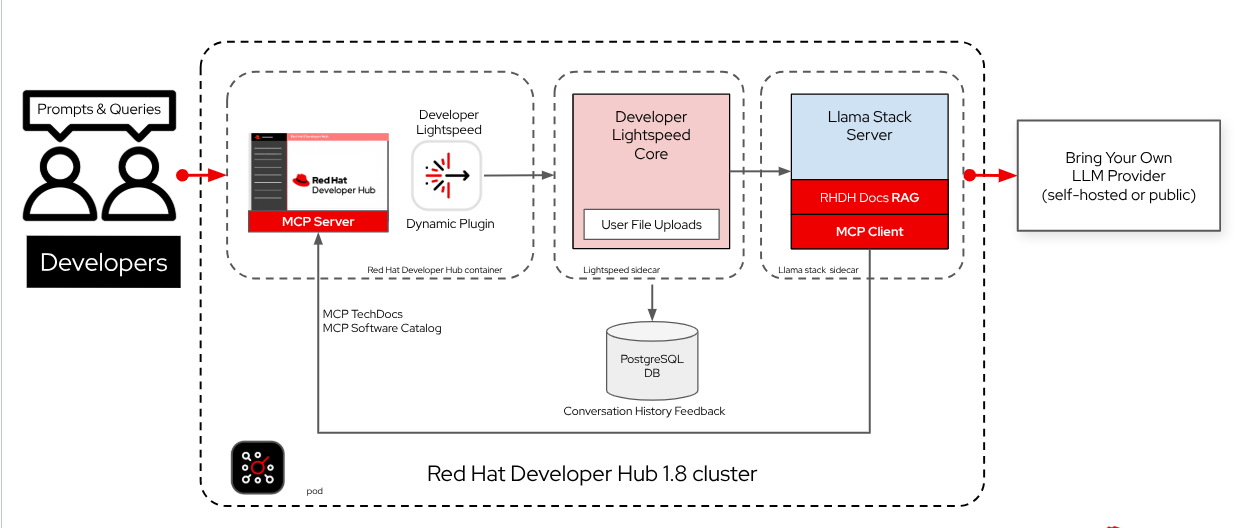

Developer Lightspeed for RHDH is available as a plugin on all platforms that host RHDH. It requires two sidecar containers: the Lightspeed Core Service (LCS) and the Llama Stack service.

The LCS container acts as the intermediary layer, which interfaces with and manages the Llama Stack service.

2.1. About Lightspeed Core Service and Llama Stack

The Lightspeed Core Service and Llama Stack deploy together as sidecar containers to augment RHDH functionality.

The Llama Stack delivers the augmented functionality by integrating and managing core components, which include:

- Large language model (LLM) inference providers

- Model Context Protocol (MCP) or Retrieval Augmented Generation (RAG) tool runtime providers

Important: You must verify that your model supports tool calling before you enable Model Context Protocol (MCP) features. Using an incompatible model results in error messages.

- Safety providers

- Vector database settings

The Lightspeed Core Service serves as the Llama Stack service intermediary. It manages the operational configuration and key data, specifically:

- User feedback collection

- MCP server configuration

- Conversation history

Llama Stack provides the inference functionality that LCS uses to process requests.

The Red Hat Developer Lightspeed for Red Hat Developer Hub plugin in RHDH sends prompts and receives LLM responses through the LCS sidecar. LCS then uses the Llama Stack sidecar service to perform inference and MCP or RAG tool calling.

Red Hat Developer Lightspeed for Red Hat Developer Hub is a Developer Preview release. You must manually deploy the Lightspeed Core Service and Llama Stack sidecar containers, and install the Red Hat Developer Lightspeed for Red Hat Developer Hub plugin on your RHDH instance.

3. Retrieval augmented generation (RAG) embeddings

The Red Hat Developer Hub documentation serves as the Retrieval-Augmented Generation (RAG) data source.

RAG initialization occurs through an initialization container, which copies the RAG data to a shared volume. The Llama Stack sidecar then mounts this shared volume to access the RAG data. The Llama Stack service uses the resulting RAG embeddings in the vector database as a reference. This allows the service to provide citations to production documentation during the inference process.
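The retrieval step described above can be pictured with a toy example. The following is a minimal sketch, not the Llama Stack implementation: it assumes hand-made three-dimensional vectors and hypothetical document titles, and it ranks entries by cosine similarity so that the best match can be returned as a citation. Real embeddings come from the embeddings model copied with the RAG data and have many more dimensions.

```python
import math

# Hypothetical, hand-made "embeddings" and document titles, for illustration only.
VECTOR_DB = [
    {"source": "Installing RHDH", "vector": [0.9, 0.1, 0.0]},
    {"source": "Configuring dynamic plugins", "vector": [0.1, 0.9, 0.1]},
    {"source": "Authorization (RBAC)", "vector": [0.0, 0.2, 0.9]},
]


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve(query_vector, top_k=1):
    """Return the sources of the top_k entries most similar to the query."""
    ranked = sorted(
        VECTOR_DB,
        key=lambda entry: cosine_similarity(query_vector, entry["vector"]),
        reverse=True,
    )
    return [entry["source"] for entry in ranked[:top_k]]
```

In this sketch, a query vector that points mostly along the third axis retrieves the hypothetical "Authorization (RBAC)" entry, which is the kind of source a response could then cite.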

4. Install and configure Red Hat Developer Lightspeed for Red Hat Developer Hub

Developer Lightspeed for RHDH includes several components that work together to provide virtual assistant (chat) functionality: Llama Stack server, Lightspeed Core Service (LCS), and Red Hat Developer Lightspeed for Red Hat Developer Hub dynamic plugins. To provide Developer Lightspeed for RHDH to your users, you must configure these components to communicate with each other.

- Llama Stack server (container sidecar)

- Based on open source Llama Stack, this service is the gateway to your LLM inferencing provider for chat services. Its modular architecture supports integrating other services, such as Model Context Protocol (MCP). To enable chat functionality, you must integrate your LLM provider with the Llama Stack server by using Bring Your Own Model (BYOM).

- Lightspeed Core Service (LCS) (container sidecar)

- Based on the open source Lightspeed Core, this service extends the Llama Stack server by maintaining chat history and gathering user feedback.

- Red Hat Developer Lightspeed for Red Hat Developer Hub (dynamic plugins)

- These plugins enable the Developer Lightspeed for RHDH user interface within your RHDH instance.

- If you are upgrading from the previous Developer Lightspeed for RHDH Developer Preview with Road-Core Service, you must remove all existing Developer Lightspeed for RHDH configurations before reinstalling.

- To prevent or resolve upgrade inconsistencies, drop and recreate the dynamic plugins volume.

This reinstallation is required due to the following fundamental architectural changes:

- The previous release used Road-Core Service as a sidecar container for interfacing with LLM providers.

- The updated architecture replaces Road-Core Service with the new Lightspeed Core Service and Llama Stack server, which requires new configurations for the plugins, volumes, containers, and secrets.

Prerequisites

- You are logged in to your OpenShift Container Platform account.

- You have an RHDH instance installed using either the Operator or the Helm chart.

- You have created a custom dynamic plugins ConfigMap.

- You have administrative access to the RHDH configuration files.

Procedure

Create the Lightspeed Core Service (LCS) ConfigMap to store the service configuration:

- In the OpenShift Container Platform web console, navigate to your RHDH instance and select the ConfigMaps tab.

- Click Create ConfigMap, select YAML view, and edit the file using the following structure. This example demonstrates the configuration for the LCS ConfigMap, typically named lightspeed-stack, which connects to the Llama Stack service locally on port 8321:

    kind: ConfigMap
    apiVersion: v1
    metadata:
      name: lightspeed-stack
    data:
      lightspeed-stack.yaml: |
        name: Lightspeed Core Service (LCS)
        service:
          host: 0.0.0.0
          # If the service does not run on port 8080, you must use ${env.LIGHTSPEED_SERVICE_PORT}. This value must match the port defined in lightspeed-app-config.yaml
          # port: ${env.LIGHTSPEED_SERVICE_PORT}
          auth_enabled: false
          workers: 1
          color_log: true
          access_log: true
        llama_stack:
          use_as_library_client: false
          url: http://localhost:8321
        user_data_collection:
          feedback_enabled: true
          feedback_storage: "/tmp/data/feedback"
          transcripts_enabled: true
          transcripts_storage: "/tmp/data/transcripts"
        authentication:
          module: "noop"
        conversation_cache:
          type: "sqlite"
          sqlite:
            db_path: "/tmp/data/conversations/lcs_cache.db"

Important: LCS requires Llama Stack expansion syntax for environment variables in the lightspeed-stack.yaml file. You must use the ${env.VAR} format with uppercase variable names. The service does not support lowercase names, such as ${env.var}, or omitting the env. prefix.

- Click Create.
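Before you create the ConfigMap, you might want to scan the lightspeed-stack.yaml content for placeholders that do not follow the ${env.VAR} rule. The following is a minimal sketch of such a check, not a tool shipped with LCS. The function name is hypothetical, and the pattern for an "uppercase name" (letters, digits, and underscores) is an assumption. The sketch accepts only the plain ${env.VAR} form, so any default-value syntax is outside its scope.

```python
import re

# Matches any ${...} placeholder so that malformed ones can be reported too.
PLACEHOLDER = re.compile(r"\$\{([^}]*)\}")
# Assumed shape of a valid name: the env. prefix followed by an uppercase identifier.
VALID_NAME = re.compile(r"env\.[A-Z][A-Z0-9_]*")


def find_invalid_placeholders(text):
    """Return every ${...} placeholder that is not of the form ${env.UPPER_CASE}."""
    invalid = []
    for match in PLACEHOLDER.finditer(text):
        if not VALID_NAME.fullmatch(match.group(1)):
            invalid.append(match.group(0))
    return invalid
```

For example, a file that contains ${env.LIGHTSPEED_SERVICE_PORT}, ${env.url}, and ${API_KEY} would have the last two reported, because one uses a lowercase name and the other omits the env. prefix.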

Create the Developer Lightspeed for RHDH ConfigMap (lightspeed-app-config) for plugin configurations:

- In the OpenShift Container Platform web console, navigate to your RHDH instance and select the ConfigMaps tab.

- Click Create ConfigMap, select YAML view, and add the following configuration:

    kind: ConfigMap
    apiVersion: v1
    metadata:
      name: lightspeed-app-config
      namespace: <namespace> # Enter your RHDH instance namespace
    data:
      app-config.yaml: |-
        backend:
          csp:
            upgrade-insecure-requests: false
            img-src:
              - "'self'"
              - "data:"
              - https://img.freepik.com
              - https://cdn.dribbble.com
              - https://avatars.githubusercontent.com # To load GitHub avatars
              - https://secure.gravatar.com # To load Gravatar icons
              - https://<your_gitlab_url> # To load GitLab icons
            script-src:
              - "'self'"
              - "'unsafe-eval'"
              - https://cdn.jsdelivr.net
        lightspeed:
          # OPTIONAL: Custom user prompts displayed to users
          # If not provided, the plugin uses built-in default prompts
          prompts:
            - title: Getting Started with Red Hat Developer Hub
              message: Can you guide me through the first steps to start using RHDH as a developer, like exploring the Software Catalog and adding my service?
          # OPTIONAL: Port for lightspeed service (default: 8080)
          # servicePort: ${LIGHTSPEED_SERVICE_PORT}
          # OPTIONAL: Override default RHDH system prompt
          # systemPrompt: "You are a helpful assistant focused on Red Hat Developer Hub development."

Note: To display user icons from external providers such as Gravatar or GitLab, include the provider URLs in the img-src directive of your Content Security Policy (CSP).

- Click Create.

Create the Llama Stack Secret (llama-stack-secrets) for LLM provider credentials:

Important: Red Hat Developer Hub 1.9 supports the vLLM, native OpenAI, Vertex AI, and Ollama providers. The vLLM provider uses the OpenAI API schema, but it does not support the official OpenAI service (api.openai.com). To use official OpenAI credentials, you must use the native OpenAI provider instead of vLLM. Using OpenAI credentials with the vLLM provider causes errors or improper responses.

- In the OpenShift Container Platform web console, navigate to Secrets.

- Click Create → Key/value secret, select YAML view, and add the following structure:

    apiVersion: v1
    kind: Secret
    metadata:
      name: llama-stack-secrets
    type: Opaque
    stringData:
      ENABLE_VLLM: "true"
      ENABLE_VERTEX_AI: ""
      ENABLE_OPENAI: ""
      ENABLE_OLLAMA: ""
      VLLM_URL: "<api_endpoint>"
      VLLM_API_KEY: "<api_key>"
      VLLM_MAX_TOKENS: ""
      VLLM_TLS_VERIFY: ""
      OPENAI_API_KEY: ""
      VERTEX_AI_PROJECT: ""
      VERTEX_AI_LOCATION: ""
      GOOGLE_APPLICATION_CREDENTIALS: ""
      OLLAMA_URL: ""
      SAFETY_MODEL: "llama-guard3:8b"
      SAFETY_URL: "http://localhost:#####/v1"
      SAFETY_API_KEY: ""

where:

- ENABLE_VLLM: Enables the vLLM provider. Set to true to activate.

- ENABLE_OPENAI: Enables the OpenAI provider. Set to true to activate.

- ENABLE_VERTEX_AI: Enables the Vertex AI provider. Set to true to activate.

- ENABLE_OLLAMA: Enables the Ollama provider. Set to true to activate.

Note: To disable a provider, leave the value blank (for example, ENABLE_VLLM="").

- VLLM_URL: The API endpoint URL for the LLM provider. The provider must comply with the OpenAI API specification. Examples include OpenAI, Red Hat OpenShift AI, and vLLM.

- VLLM_API_KEY: Required for remote services. Set this to the API key or token required for authentication with your remote LLM provider, if it is compatible with the OpenAI API specification.

- VLLM_MAX_TOKENS: Optional. The maximum number of tokens the model generates.

- VLLM_TLS_VERIFY: Optional. Specifies whether the system verifies the TLS certificate for the vLLM endpoint.

- OPENAI_API_KEY: The API key required to access OpenAI models through the OpenAI API.

- VERTEX_AI_PROJECT: The Google Cloud project ID required to access Gemini through Vertex AI.

- VERTEX_AI_LOCATION: The Google Cloud region required to access Gemini through Vertex AI.

- GOOGLE_APPLICATION_CREDENTIALS: The credentials required to authenticate with Google Cloud to access Vertex AI.

- OLLAMA_URL: The URL for the Ollama service.

- SAFETY_MODEL: The identifier for the safety model. The default model is llama-guard3:8b, which is optimized by Llama Stack for safety classification.

- SAFETY_URL: The URL hosting the safety model. You must append /v1 to the host address (for example, localhost:#/v1) so that Llama Stack can correctly route moderation requests to the API.

- SAFETY_API_KEY: The authentication token from your safety model, required to secure the moderation channel and prevent unauthorized access to your safety infrastructure.

Tip: To integrate Developer Lightspeed for RHDH effectively with Model Context Protocol (MCP), you must provide the correct authorization headers. For detailed instructions, see Configuring MCP clients to access the RHDH server.

- Click Create.
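Because an enabled provider with a missing URL or key typically surfaces only as a runtime error, it can help to check the Secret values before you apply them. The following is a minimal sketch under stated assumptions: the mapping of each ENABLE_* flag to the keys it needs is inferred from the variable descriptions above rather than taken from an official schema, and the function name is hypothetical.

```python
# Keys each provider needs when enabled. This mapping is an assumption
# inferred from the variable descriptions, not an official schema.
REQUIRED_KEYS = {
    "ENABLE_VLLM": ["VLLM_URL"],
    "ENABLE_OPENAI": ["OPENAI_API_KEY"],
    "ENABLE_VERTEX_AI": ["VERTEX_AI_PROJECT", "VERTEX_AI_LOCATION"],
    "ENABLE_OLLAMA": ["OLLAMA_URL"],
}


def check_secret(string_data):
    """Return a list of human-readable problems found in the Secret's stringData."""
    problems = []
    # A provider counts as enabled only when its flag is the string "true".
    enabled = [flag for flag in REQUIRED_KEYS if string_data.get(flag, "") == "true"]
    if not enabled:
        problems.append("no provider is enabled")
    for flag in enabled:
        for key in REQUIRED_KEYS[flag]:
            if not string_data.get(key):
                problems.append(f"{flag} is set but {key} is empty")
    # The safety URL must carry the /v1 suffix described above.
    safety_url = string_data.get("SAFETY_URL", "")
    if safety_url and not safety_url.rstrip("/").endswith("/v1"):
        problems.append("SAFETY_URL must end with /v1")
    return problems
```

An empty list means that the sketch found nothing to report. It does not confirm that the credentials themselves are valid.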

Update the dynamic plugins ConfigMap: Add the Developer Lightspeed for RHDH plugin image to your existing dynamic plugins ConfigMap (dynamic-plugins-rhdh).

    includes:
      - dynamic-plugins.default.yaml
    plugins:
      - disabled: false
        package: oci://ghcr.io/redhat-developer/rhdh-plugin-export-overlays/red-hat-developer-hub-backstage-plugin-lightspeed:bs_1.45.3__1.2.3
        pluginConfig:
          dynamicPlugins:
            frontend:
              red-hat-developer-hub.backstage-plugin-lightspeed:
                translationResources:
                  - importName: lightspeedTranslations
                    module: Alpha
                    ref: lightspeedTranslationRef
                dynamicRoutes:
                  - path: /lightspeed
                    importName: LightspeedPage
                mountPoints:
                  - mountPoint: application/listener
                    importName: LightspeedFAB
                  - mountPoint: application/provider
                    importName: LightspeedDrawerProvider
                  - mountPoint: application/internal/drawer-state
                    importName: LightspeedDrawerStateExposer
                    config:
                      id: lightspeed
                  - mountPoint: application/internal/drawer-content
                    importName: LightspeedChatContainer
                    config:
                      id: lightspeed
                      priority: 100
      - disabled: false
        package: oci://ghcr.io/redhat-developer/rhdh-plugin-export-overlays/red-hat-developer-hub-backstage-plugin-lightspeed-backend:bs_1.45.3__1.2.3

Required only if your environment uses another global floating action button (FAB): You must move the existing FAB or disable it to prevent interface elements from overlapping by updating your dynamic plugin configuration file with the following changes:

    - package: ./dynamic-plugins/dist/red-hat-developer-hub-backstage-plugin-bulk-import
      disabled: true
      pluginConfig:
        dynamicPlugins:
          frontend:
            red-hat-developer-hub.backstage-plugin-bulk-import:
              mountPoints:
                # START: If the user has an existing BulkImportPage FAB, move the FAB to the left
                - mountPoint: global.floatingactionbutton/config
                  importName: BulkImportPage
                  config:
                    slot: 'bottom-left'
                    # END
                    icon: BulkImportIcon
                    label: 'Bulk import'
                    toolTip: 'Register multiple repositories in bulk'
                    to: /bulk-import
              translationResources:
                - importName: bulkImportTranslations
                  module: Alpha
                  ref: bulkImportTranslationRef
              appIcons:
                - name: bulkImportIcon
                  importName: BulkImportIcon
              dynamicRoutes:
                - path: /bulk-import
                  importName: BulkImportPage
                  menuItem:
                    icon: bulkImportIcon
                    text: Bulk import
                    textKey: menuItem.bulkImport

Update your deployment configuration: Update the deployment configuration based on how your RHDH instance was installed. You must add two sidecar containers: llama-stack and lightspeed-core.

For an Operator-installed RHDH instance (update the Backstage custom resource (CR)):

- In the spec.application.appConfig.configMaps section of your Backstage CR, add the Developer Lightspeed for RHDH custom app configuration:

    appConfig:
      configMaps:
        - name: lightspeed-app-config

- Update the spec.deployment.patch.spec.template.spec.volumes specification to include volumes for LCS configuration (lightspeed-stack), shared storage for feedback (shared-storage), and RAG data (rag-data-volume):

    volumes:
      - configMap:
          name: lightspeed-stack
        name: lightspeed-stack
      - emptyDir: {}
        name: shared-storage
      - emptyDir: {}
        name: rag-data-volume

- Update the spec.deployment.patch.spec.template.spec.initContainers specification to initialize RAG data:

    initContainers:
      - name: init-rag-data
        image: 'quay.io/redhat-ai-dev/rag-content:release-1.9-lcs'
        command: ["sh", "-c", "cp -r /rag/vector_db/rhdh_product_docs /rag-content/ && cp -r /rag/embeddings_model /rag-content/"]
        volumeMounts:
          - mountPath: /rag-content
            name: rag-data-volume

- Add the Llama Stack (llama-stack) and the LCS (lightspeed-core) containers to the spec.deployment.patch.spec.template.spec.containers section:

    containers:
      # ... Your existing RHDH container definition ...
      - envFrom:
          - secretRef:
              name: llama-stack-secrets
        image: 'quay.io/redhat-ai-dev/llama-stack:0.1.4' # Llama Stack image
        name: llama-stack
        volumeMounts:
          - mountPath: /app-root/.llama
            name: shared-storage
          - mountPath: /rag-content
            name: rag-data-volume
      - image: 'quay.io/lightspeed-core/lightspeed-stack:0.4.0' # Lightspeed Core Service image
        name: lightspeed-core
        volumeMounts:
          - mountPath: /app-root/lightspeed-stack.yaml
            name: lightspeed-stack
            subPath: lightspeed-stack.yaml
          - mountPath: /tmp/data/feedback
            name: shared-storage
          - mountPath: /tmp/data/transcripts
            name: shared-storage
          - mountPath: /tmp/data/conversations
            name: shared-storage

- Click Save. The Pods are automatically restarted.
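A common mistake when hand-editing the deployment patch is a volumeMount that refers to a volume name that was never declared. The following is a minimal sketch of a consistency check, assuming that you have already loaded the volumes and containers sections into Python dictionaries (for example, with a YAML parser of your choice). The function name is hypothetical, and the sample data is an abbreviated copy of the Operator example.

```python
def undefined_volume_mounts(volumes, containers):
    """Return (container, volume) pairs whose volumeMount has no matching volume."""
    defined = {volume["name"] for volume in volumes}
    missing = []
    for container in containers:
        for mount in container.get("volumeMounts", []):
            if mount["name"] not in defined:
                missing.append((container["name"], mount["name"]))
    return missing


# Abbreviated copy of the volumes and sidecar mounts from the Operator example.
volumes = [
    {"name": "lightspeed-stack", "configMap": {"name": "lightspeed-stack"}},
    {"name": "shared-storage", "emptyDir": {}},
    {"name": "rag-data-volume", "emptyDir": {}},
]
containers = [
    {"name": "llama-stack", "volumeMounts": [
        {"mountPath": "/app-root/.llama", "name": "shared-storage"},
        {"mountPath": "/rag-content", "name": "rag-data-volume"},
    ]},
    {"name": "lightspeed-core", "volumeMounts": [
        {"mountPath": "/app-root/lightspeed-stack.yaml", "name": "lightspeed-stack"},
        {"mountPath": "/tmp/data/feedback", "name": "shared-storage"},
    ]},
]
```

With the sample data, undefined_volume_mounts(volumes, containers) returns an empty list. If you drop rag-data-volume from volumes, the function reports the llama-stack mount that depends on it.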

For a Helm-installed RHDH instance (update the Helm chart):

- Add your dynamic plugins configuration in the global.dynamic property.

    global:
      dynamic:
        plugins:
          - package: oci://ghcr.io/redhat-developer/rhdh-plugin-export-overlays/red-hat-developer-hub-backstage-plugin-lightspeed:bs_1.45.3__1.2.3
            pluginConfig:
              dynamicPlugins:
                frontend:
                  red-hat-developer-hub.backstage-plugin-lightspeed:
                    translationResources:
                      - importName: lightspeedTranslations
                        module: Alpha
                        ref: lightspeedTranslationRef
                    dynamicRoutes:
                      - path: /lightspeed
                        importName: LightspeedPage
                    mountPoints:
                      - mountPoint: application/listener
                        importName: LightspeedFAB
                      - mountPoint: application/provider
                        importName: LightspeedDrawerProvider
                      - mountPoint: application/internal/drawer-state
                        importName: LightspeedDrawerStateExposer
                        config:
                          id: lightspeed
                      - mountPoint: application/internal/drawer-content
                        importName: LightspeedChatContainer
                        config:
                          id: lightspeed
                          priority: 100
          - package: oci://ghcr.io/redhat-developer/rhdh-plugin-export-overlays/red-hat-developer-hub-backstage-plugin-lightspeed-backend:bs_1.45.3__1.2.3

- Add your Developer Lightspeed for RHDH custom app config file to extraAppConfig:

    extraAppConfig:
      - configMapRef: lightspeed-app-config
        filename: app-config.yaml

- Add the Llama Stack Secret to extraEnvVarsSecrets:

    extraEnvVarsSecrets:
      - llama-stack-secrets

- Update the extraVolumes section to include the LCS ConfigMap (lightspeed-stack), shared storage, and RAG data volume:

    extraVolumes:
      - configMap:
          name: lightspeed-stack
        name: lightspeed-stack
      - emptyDir: {}
        name: shared-storage
      - emptyDir: {}
        name: rag-data-volume

- Update the upstream.backstage.initContainers section (if supported by your Helm chart structure) to initialize RAG data.

    initContainers:
      - name: init-rag-data
        image: 'quay.io/redhat-ai-dev/rag-content:release-1.9-lcs'
        command:
          - "sh"
          - "-c"
          - "echo 'Copying RAG data...'; cp -r /rag/vector_db/rhdh_product_docs /data/ && cp -r /rag/embeddings_model /data/ && echo 'Copy complete.'"
        volumeMounts:
          - mountPath: /data
            name: rag-data-volume

- Add the Llama Stack and LCS container definitions to extraContainers.

Note: If you have Road-Core Service installed from the previous Red Hat Developer Lightspeed for Red Hat Developer Hub configuration, you must replace the older single container configuration found in source with the two sidecars.

    extraContainers:
      # Llama Stack Container
      - envFrom:
          - secretRef:
              name: llama-stack-secrets
        image: 'quay.io/redhat-ai-dev/llama-stack:0.1.4'
        name: llama-stack
        volumeMounts:
          - mountPath: /app-root/.llama
            name: shared-storage
          - mountPath: /rag-content/embeddings_model
            name: rag-data-volume
            subPath: embeddings_model
          - mountPath: /rag-content/vector_db/rhdh_product_docs
            name: rag-data-volume
            subPath: rhdh_product_docs
      # Lightspeed Core Service Container
      - image: 'quay.io/lightspeed-core/lightspeed-stack:0.4.0'
        name: lightspeed-core
        volumeMounts:
          - mountPath: /app-root/lightspeed-stack.yaml
            name: lightspeed-stack
            subPath: lightspeed-stack.yaml
          - mountPath: /tmp/data/feedback
            name: shared-storage
          - mountPath: /tmp/data/transcripts
            name: shared-storage
          - mountPath: /tmp/data/conversations
            name: shared-storage

- Click Save and then Helm upgrade.
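In the Helm variant, the init container copies directories into the root of the RAG data volume, and the Llama Stack container then mounts them through subPath values. The two must agree on directory names. The following is a minimal sketch of that relationship, assuming the standard behavior of cp -r when the destination directory already exists (the copy keeps the last path component of the source). The function names are hypothetical.

```python
import posixpath


def copied_directory_names(sources):
    """Return the directory names that `cp -r <source> <dest>/` creates under <dest>."""
    return {posixpath.basename(source.rstrip("/")) for source in sources}


def missing_sub_paths(sources, sub_paths):
    """Return the subPath values that the init container never creates."""
    return sorted(set(sub_paths) - copied_directory_names(sources))
```

For the Helm example, the sources /rag/vector_db/rhdh_product_docs and /rag/embeddings_model produce the directories rhdh_product_docs and embeddings_model, which match the two subPath values used by the llama-stack container.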

Optional: Manage authorization (RBAC): If you have users who are not administrators, you must define permissions and roles for them to use Developer Lightspeed for RHDH. The Lightspeed Backend plugin uses Backstage RBAC for authorization.

For an Operator-installed RHDH instance:

- Configure the required RBAC permissions by defining an rbac-policies.csv file that includes the lightspeed.chat.read, lightspeed.chat.create, and lightspeed.chat.delete permissions:

    p, role:default/<your_team>, lightspeed.chat.read, read, allow
    p, role:default/<your_team>, lightspeed.chat.create, create, allow
    p, role:default/<your_team>, lightspeed.chat.delete, delete, allow
    g, user:default/<your_user>, role:default/<your_team>

- Upload your rbac-policies.csv file to an rbac-policies ConfigMap in your OpenShift Container Platform project containing RHDH and update your Backstage CR:

    apiVersion: rhdh.redhat.com/v1alpha5
    kind: Backstage
    spec:
      application:
        extraFiles:
          mountPath: /opt/app-root/src
          configMaps:
            - name: rbac-policies

For a Helm-installed RHDH instance:

- Configure the required RBAC permissions by defining an rbac-policies.csv file:

    p, role:default/<your_team>, lightspeed.chat.read, read, allow
    p, role:default/<your_team>, lightspeed.chat.create, create, allow
    p, role:default/<your_team>, lightspeed.chat.delete, delete, allow
    g, user:default/<your_user>, role:default/<your_team>

- Optional: Declare policy administrators by editing your custom RHDH ConfigMap (app-config.yaml) and adding the following code to enable selected authenticated users to configure RBAC policies through the REST API or Web UI:

    permission:
      enabled: true
      rbac:
        policies-csv-file: /opt/app-root/src/rbac-policies.csv
        policyFileReload: true
        admin:
          users:
            - name: user:default/<your_policy_administrator_name>
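If you manage several teams, writing the policy lines by hand becomes repetitive. The following is a minimal sketch that generates the rbac-policies.csv lines in the same format as the examples above. The function name and the team-a and alice values in the usage note are hypothetical. The permission names and actions are the ones listed in this procedure.

```python
# Permission name -> action, as listed in the rbac-policies.csv examples.
CHAT_PERMISSIONS = {
    "lightspeed.chat.read": "read",
    "lightspeed.chat.create": "create",
    "lightspeed.chat.delete": "delete",
}


def build_policy_lines(role, users):
    """Return rbac-policies.csv lines granting chat permissions to a role and its users."""
    # One "p" line per permission, then one "g" line per user assigned to the role.
    lines = [
        f"p, role:default/{role}, {permission}, {action}, allow"
        for permission, action in CHAT_PERMISSIONS.items()
    ]
    lines += [f"g, user:default/{user}, role:default/{role}" for user in users]
    return lines
```

Calling build_policy_lines("team-a", ["alice"]) returns three permission lines followed by one role assignment line, which you can join with newlines and write to rbac-policies.csv.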

Verification

- Log in to your RHDH instance.

- Verify that you can see and access the Lightspeed FAB on the home page.

- Select the Lightspeed FAB and verify the chat screen loads.

5. Customize Developer Lightspeed for RHDH

You can customize Developer Lightspeed for RHDH functionalities such as gathering feedback, storing chat history in PostgreSQL, and configuring Model Context Protocol (MCP) tools.

5.1. Gather feedback in Developer Lightspeed for RHDH

Feedback collection is an optional feature configured on the LCS. This feature lets users submit thumbs-up or thumbs-down ratings and text comments directly from the chat window.

LCS collects the feedback, the user’s query, and the response of the model, storing the data as a JSON file on the local file system of the Pod. A platform administrator must later collect and analyze this data to assess model performance and improve the user experience.

The collected data resides in the cluster where RHDH and LCS are deployed, making it accessible only to platform administrators for that cluster. For data removal, users must request this action from their platform administrator, as Red Hat neither collects nor accesses this data.

Procedure

- To enable feedback collection, in the LCS configuration file (lightspeed-stack.yaml), add the following settings:

    user_data_collection:
      feedback_enabled: true
      feedback_storage: "/tmp/data/feedback"
      transcripts_enabled: true
      transcripts_storage: "/tmp/data/transcripts"

- To disable feedback collection, in the LCS configuration file (lightspeed-stack.yaml), set feedback_enabled to false:

    user_data_collection:
      feedback_enabled: false
      feedback_storage: "/tmp/data/feedback"
      transcripts_enabled: true
      transcripts_storage: "/tmp/data/transcripts"
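Because LCS stores feedback as JSON files on the file system, a platform administrator can summarize it with a short script after copying the files out of the Pod. The following is a minimal sketch, not a supported tool. It assumes one JSON object per file, and the sentiment field name is a hypothetical placeholder, so adjust it to match the records that your LCS version writes.

```python
import json
from collections import Counter
from pathlib import Path


def summarize_feedback(directory):
    """Count feedback records per `sentiment` value across all JSON files in a directory."""
    counts = Counter()
    for path in sorted(Path(directory).glob("*.json")):
        with path.open(encoding="utf-8") as handle:
            record = json.load(handle)
        # `sentiment` is a hypothetical field name; adjust it to the stored schema.
        counts[record.get("sentiment", "unknown")] += 1
    return dict(counts)
```

You could run the function against a local copy of the directory configured in feedback_storage. Records without the assumed field are counted under "unknown".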

5.2. Update the system prompt in Developer Lightspeed for RHDH

You can customize the system prompt that Developer Lightspeed for RHDH uses to frame queries to your LLM, refining the context and instructions that the LLM receives to improve response relevance for your environment.

Procedure

To set a custom system prompt, in your Developer Lightspeed for RHDH app config file, add or modify the lightspeed.systemPrompt key and set its value to your preferred prompt string as shown in the following example:

    lightspeed:
      # ... other lightspeed configurations
      systemPrompt: "You are a helpful assistant focused on Red Hat Developer Hub development."

Set systemPrompt to prefix all queries sent by Developer Lightspeed for RHDH to the LLM with this instruction, guiding the model to generate more tailored responses.

5.3. Customize the chat history storage in Developer Lightspeed for RHDH

By default, Developer Lightspeed for RHDH stores chat history in a non-persistent local database within the LCS container. You can configure Developer Lightspeed for RHDH to use PostgreSQL for persistent chat history storage.

Configuring Developer Lightspeed for RHDH to use PostgreSQL records prompts and responses, which platform administrators can review. You must assess any data privacy and security implications if user chat history contains private, sensitive, or confidential information. Users who want their chat data removed must ask their platform administrator to perform this action. Red Hat does not collect or access this chat history data.

Procedure

Configure the chat history storage type in the LCS configuration file (lightspeed-stack.yaml) using one of the following options:

- To enable persistent storage with PostgreSQL, add the following configuration:

    conversation_cache:
      type: postgres
      postgres:
        host: <your_database_host>
        port: <your_database_port>
        db: <your_database_name>
        user: <your_user_name>
        password: <postgres_password>

- To retain the default, non-persistent SQLite storage, make sure the configuration is set as shown in the following example:

    conversation_cache:
      type: "sqlite"
      sqlite:
        db_path: "/tmp/data/conversations/lcs_cache.db"

- Restart your LCS service to apply the new configuration.
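If you generate lightspeed-stack.yaml from a script, a small helper can catch a missing PostgreSQL setting before the service restarts. The following is a minimal sketch. The function name is hypothetical, and the list of required keys per storage type is taken from the two examples above rather than from a formal schema.

```python
def build_conversation_cache(storage_type, **settings):
    """Return a conversation_cache mapping for the given storage type."""
    # Required keys per storage type, mirroring the configuration examples.
    required = {
        "sqlite": ["db_path"],
        "postgres": ["host", "port", "db", "user", "password"],
    }
    if storage_type not in required:
        raise ValueError(f"unsupported storage type: {storage_type}")
    missing = [key for key in required[storage_type] if key not in settings]
    if missing:
        raise ValueError(f"missing settings: {', '.join(missing)}")
    return {"type": storage_type, storage_type: dict(settings)}
```

The returned dictionary has the same shape as the conversation_cache section, so you can serialize it into the configuration file with the YAML library of your choice.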

5.3.1. Enable secure AI research with Developer Lightspeed Notebooks

As an administrator, configure Red Hat Developer Hub and Red Hat Developer Lightspeed for Red Hat Developer Hub to provide users with private, document-based AI workspaces.

Prerequisites

- A deployed instance of RHDH.

- A Lightspeed Stack service is running and accessible to the backend.

- A supported large language model (LLM), such as Granite 7B or higher, is available.

Procedure

Enable the notebook feature and define your model by adding the following configuration to your app-config.yaml file:

    lightspeed:
      notebooks:
        enabled: true
        queryDefaults:
          model: ${NOTEBOOKS_QUERY_MODEL} # Use the exact model name
          provider_id: ${NOTEBOOKS_QUERY_PROVIDER_ID}

Note: If the model name is incorrect, an error message appears in the logs and the user interface.

Grant user access through role-based access control (RBAC) policies by defining permissions in your rbac-policies.csv file:

- Add the permission policy:

    p, role:default/<your_team_name>, lightspeed.notebooks.use, update, allow

- Assign the role to specific users:

    g, user:default/<your_user_name>, role:default/<your_team_name>

- Save your files and restart the Developer Hub service to apply the configuration changes.

Verification

- Log in to RHDH using an account assigned to the RBAC role defined in the configuration.

- Confirm that the Notebooks tab is visible next to the Chat tab in the primary navigation bar.

- Click the Notebooks tab and ensure the My Notebooks dashboard loads without error messages.

6. Solve project-specific challenges with Developer Lightspeed for RHDH Notebooks

Use Developer Lightspeed for RHDH Notebooks to research, troubleshoot, and analyze projects by using a large language model (LLM) grounded in your own documentation. Notebooks use Retrieval-Augmented Generation (RAG) to ensure that responses are based strictly on the files you upload.

This section describes Developer Preview features in the Developer Lightspeed for RHDH Notebooks plugin. Developer Preview features are not supported by Red Hat in any way and are not functionally complete or production-ready. Do not use Developer Preview features for production or business-critical workloads. Developer Preview features provide early access to functionality in advance of possible inclusion in a Red Hat product offering. Customers can use these features to test functionality and provide feedback during the development process. Developer Preview features might not have any documentation, are subject to change or removal at any time, and have received limited testing. Red Hat might provide ways to submit feedback on Developer Preview features without an associated SLA.

For more information about the support scope of Red Hat Developer Preview features, see Developer Preview Support Scope.

Use Notebooks to achieve the following goals:

- Query your documentation: Upload project files to ask questions, summarize content, or brainstorm ideas based on those specific documents.

- Troubleshoot with project-specific context: Upload project logs, architecture diagrams, or onboarding files to receive technical answers tailored to your specific environment.

- Securely analyze private data: Conduct research in isolated sessions. Your uploaded data and chat history remain private and are inaccessible to other users.

- Run multiple Notebooks: Uploaded documents and chat history remain available, and you can reopen each Notebook from the Notebook dashboard.

- Verify AI responses with citations: Use the Sources chips to view the exact document excerpts used to generate an answer.

- Organize research: Use metadata and tagging to categorize different research topics.

The following constraints apply during the Developer Preview:

- Data boundaries: The AI can only access data within the active Notebook session.

- Private access: You cannot share notebooks or documents with other team members.

- Manual uploads: You must upload files directly. The tool does not support URL ingestion or web scraping.

- Ephemeral defaults: Without a configured Persistent Volume (PV), all Notebook data and uploaded files are lost upon service restart.

6.1. Enable secure AI research with Developer Lightspeed Notebooks

As an administrator, configure Red Hat Developer Hub and Red Hat Developer Lightspeed for Red Hat Developer Hub to provide users with private, document-based AI workspaces.

Prerequisites

- A deployed instance of RHDH.

- A Lightspeed Stack service is running and accessible to the backend.

- A supported large language model (LLM), such as Granite 7B or higher, is available.

Procedure

Enable the notebook feature and define your model by adding the following configuration to your app-config.yaml file:

    lightspeed:
      notebooks:
        enabled: true
        queryDefaults:
          model: ${NOTEBOOKS_QUERY_MODEL} # Use the exact model name
          provider_id: ${NOTEBOOKS_QUERY_PROVIDER_ID}

Note: If the model name is incorrect, an error message appears in the logs and the user interface.

Grant user access through role-based access control (RBAC) policies by defining permissions in your rbac-policy-csv file:

Add the permission policy:

p, role:default/_<your_team_name>_, lightspeed.notebooks.use, update, allow

Assign the role to specific users:

g, user:default/_<your_user_name>_, role:default/_<your_team_name>_

- Save your files and restart the Developer Hub service to apply the configuration changes.

Verification

- Log in to RHDH using an account assigned to the RBAC role defined in the configuration.

- Confirm that the Notebooks tab is visible next to the Chat tab in the primary navigation bar.

- Click the Notebooks tab and ensure the My Notebooks dashboard loads without error messages.
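When several users need Notebooks access, the two RBAC policy lines from the procedure can be generated rather than typed by hand. The following Python sketch is a minimal illustration: the function name build_notebook_policy and the sample team and user names are hypothetical, and the line format is copied from the policy examples in this section.

```python
def build_notebook_policy(team: str, users: list[str]) -> list[str]:
    """Build the permission line for a role and one assignment line per user."""
    role = f"role:default/{team}"
    lines = [f"p, {role}, lightspeed.notebooks.use, update, allow"]
    lines += [f"g, user:default/{user}, {role}" for user in users]
    return lines


# Placeholder team and user names for illustration.
for line in build_notebook_policy("platform-team", ["alice", "bob"]):
    print(line)
```

Review the generated lines before you add them to your policy file.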

6.2. Enable data persistence for Developer Lightspeed Notebooks

To persist Notebook sessions, documents, and AI history across service restarts, as an administrator, you must configure the Notebooks storage backends to use persistent volumes.

By default, the service uses ephemeral storage in the /tmp directory, which the system clears during a pod restart.

Prerequisites

- You have administrator privileges to the RHDH instance.

- You have access to the configuration file (llama-stack-configs/config.yaml).

- A Persistent Volume Claim (PVC) is provisioned in your cluster and mounted to the container (for example, at /var/lib/lightspeed-data).

Procedure

- Create a custom ConfigMap to store your configuration changes and reference it in your deployment. You must use a custom ConfigMap to ensure changes persist during Operator reconciliation or Helm upgrades.

Modify storage paths: In your custom ConfigMap, update the llama-stack-configs/config.yaml file to point the kv_notebooks storage backend to your persistent mount point:

    spec:
      initContainers:
        - name: init-notebooks-dir
          # ... complete init container
      containers:
        - name: lightspeed-core
          image: quay.io/lightspeed-core/lightspeed-stack:0.5.1
          ports:
            - containerPort: 8080
          volumeMounts:
            - name: notebooks-storage
              mountPath: /var/lib/lightspeed-data
            - name: config # ← Added all ConfigMap mounts
              mountPath: /app-root/config.yaml
              subPath: config.yaml
            - name: lightspeed-config
              mountPath: /app-root/lightspeed-stack.yaml
              subPath: lightspeed-stack.yaml
            - name: profile
              mountPath: /app-root/rhdh-profile.py
              subPath: rhdh-profile.py
          livenessProbe: # ← Added health checks
            httpGet:
              path: /readiness
              port: 8080
          readinessProbe:
            httpGet:
              path: /readiness
              port: 8080
      volumes: # ← Added all volume definitions
        - name: notebooks-storage
          persistentVolumeClaim:
            claimName: lightspeed-notebooks-pvc
        - name: config
          configMap:
            name: llama-stack-config
        - name: lightspeed-config
          configMap:
            name: lightspeed-core-config
        - name: profile
          configMap:
            name: rhdh-profile

Update volume mounts: Update your deployment manifest to include the init container, volume mounts, and volume definitions:

    spec:
      template:
        spec:
          initContainers:
            - name: init-notebooks-storage
              image: registry.access.redhat.com/ubi9/ubi-minimal
              command: ["sh", "-c", "mkdir -p /var/lib/lightspeed-data/notebooks && chmod -R 777 /var/lib/lightspeed-data/notebooks"]
              volumeMounts:
                - name: lightspeed-notebooks
                  mountPath: /var/lib/lightspeed-data
          containers:
            - name: lightspeed-stack
              image: quay.io/lightspeed-core/lightspeed-stack:0.5.1
              ports:
                - containerPort: 8080
              volumeMounts:
                - name: lightspeed-notebooks
                  mountPath: /var/lib/lightspeed-data
                - name: config
                  mountPath: /app-root/config.yaml
                  subPath: config.yaml
              livenessProbe:
                httpGet:
                  path: /readiness
                  port: 8080
              readinessProbe:
                httpGet:
                  path: /readiness
                  port: 8080
          volumes:
            - name: lightspeed-notebooks
              persistentVolumeClaim:
                claimName: lightspeed-notebooks-pvc
            - name: config
              configMap:
                name: llama-stack-config

- Apply the updated configuration and restart the service.

Verification

- In Red Hat Developer Hub, create a Notebook and upload a test document.

- Send a message to the virtual assistant and verify that the response is based on the document.

Restart the pod:

$ oc delete pod <pod_name>

- After the pod recovers, refresh the My Notebooks dashboard.

- Verify that the Notebook and the uploaded file are still accessible.
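Because data under /tmp is cleared on a pod restart, it can help to confirm that every storage path you configured sits under the persistent mount point before you restart anything. The following Python sketch shows one way to do that check. The function name find_ephemeral_paths, the backend names, and the database file names are hypothetical; only the /tmp default and the /var/lib/lightspeed-data example mount point come from this section.

```python
from pathlib import PurePosixPath


def find_ephemeral_paths(db_paths: dict[str, str], mount_point: str) -> list[str]:
    """Return the names of backends whose path is outside the persistent mount."""
    mount = PurePosixPath(mount_point)
    return sorted(
        name
        for name, path in db_paths.items()
        if not PurePosixPath(path).is_relative_to(mount)
    )


# Hypothetical backend-to-path mapping, as you might read it from your configuration.
configured_paths = {
    "kv_notebooks": "/tmp/kv_notebooks.db",
    "kv_default": "/var/lib/lightspeed-data/notebooks/kvstore.db",
}

print(find_ephemeral_paths(configured_paths, "/var/lib/lightspeed-data"))
```

Any backend that the function reports still writes to ephemeral storage and loses its data when the pod restarts.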

7. Get AI-assisted help for your development tasks

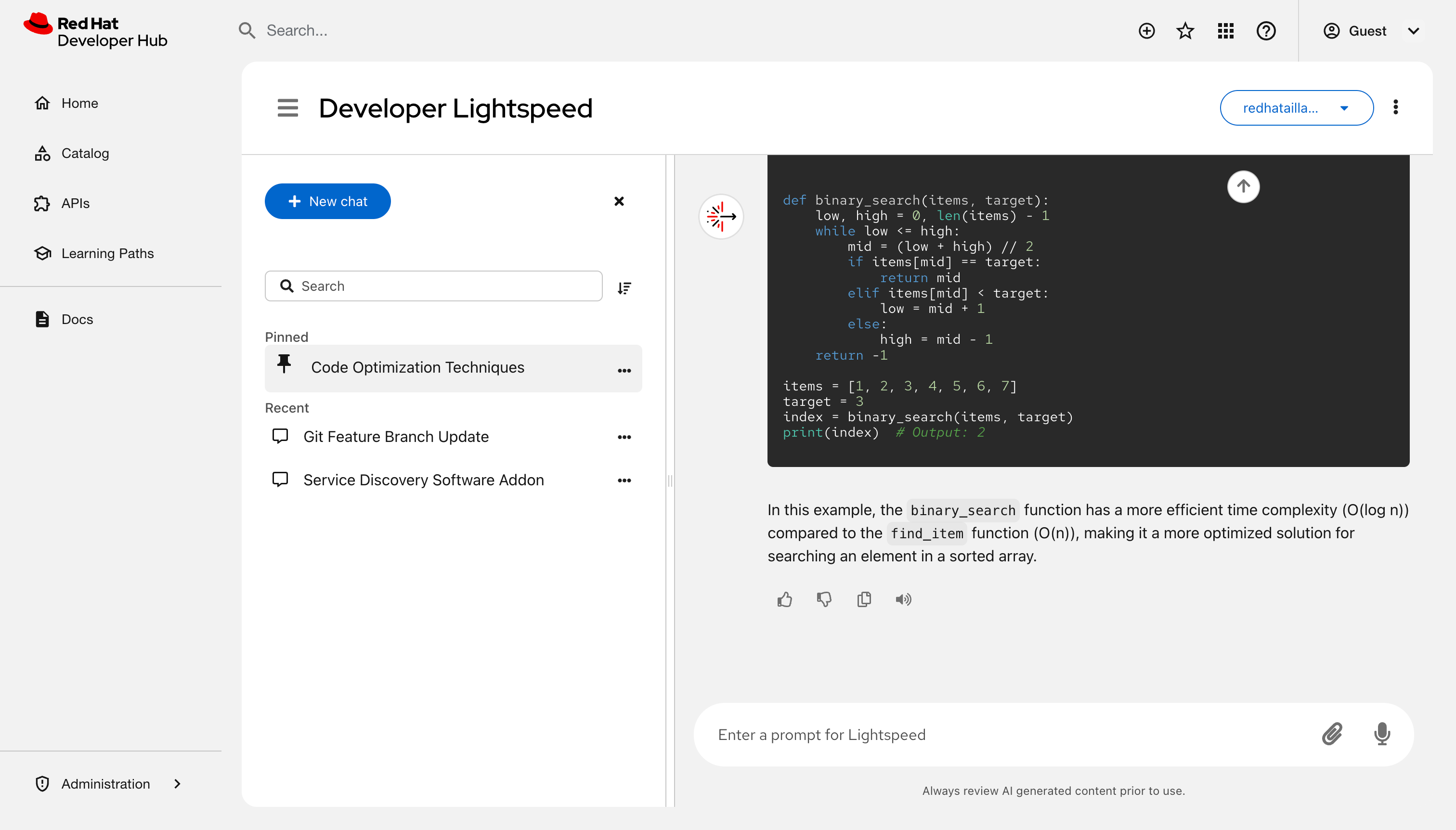

Use Red Hat Developer Lightspeed for Red Hat Developer Hub, a generative AI assistant in Red Hat Developer Hub (RHDH), to ask platform questions, analyze logs, generate code, and create test plans from a conversational interface.

7.1. Prerequisites

- Your platform engineer has installed and configured the Developer Lightspeed for RHDH plugin in your RHDH instance.

7.2. Configure safety guards in Red Hat Developer Hub

To protect users from insecure or harmful AI model outputs, Red Hat Developer Hub (RHDH) uses Llama Guard as a default safety shield. You must configure these guards to align with your organization’s security policies.

- Default safety guard configuration: The system uses Llama Guard as the default safety shield. Override these settings in the run.yaml file.

The external_providers_dir parameter defaults to null and is no longer required in your configuration.

- Overriding safety guards: To implement custom security layers or different safety shields, you must define a new safety provider within a custom run.yaml file.

- Disabling safety guards: To run RHDH without safety guards, you must use the run-no-guard.yaml configuration file.

Running without safety guards increases the risk of invalid model output. Only use this configuration in secure development environments.

Applying the no-guard configuration: To run the system without a safety guard, perform these steps:

Procedure

Add the following YAML file as a config map to your namespace:

    version: 2
    image_name: redhat-ai-dev-llama-stack-no-guard
    apis:
      - agents
      - inference
      - safety
      - tool_runtime
      - vector_io
      - files
    container_image:
    external_providers_dir:
    providers:
      agents:
        - config:
            persistence:
              agent_state:
                namespace: agents
                backend: kv_default
              responses:
                table_name: responses
                backend: sql_default
          provider_id: meta-reference
          provider_type: inline::meta-reference
      inference:
        - provider_id: ${env.ENABLE_VLLM:+vllm}
          provider_type: remote::vllm
          config:
            url: ${env.VLLM_URL:=}
            api_token: ${env.VLLM_API_KEY:=}
            max_tokens: ${env.VLLM_MAX_TOKENS:=4096}
            tls_verify: ${env.VLLM_TLS_VERIFY:=true}
        - provider_id: ${env.ENABLE_OLLAMA:+ollama}
          provider_type: remote::ollama
          config:
            url: ${env.OLLAMA_URL:=http://localhost:11434}
        - provider_id: ${env.ENABLE_OPENAI:+openai}
          provider_type: remote::openai
          config:
            api_key: ${env.OPENAI_API_KEY:=}
        - provider_id: ${env.ENABLE_VERTEX_AI:+vertexai}
          provider_type: remote::vertexai
          config:
            project: ${env.VERTEX_AI_PROJECT:=}
            location: ${env.VERTEX_AI_LOCATION:=us-central1}
        - provider_id: sentence-transformers
          provider_type: inline::sentence-transformers
          config: {}
      tool_runtime:
        - provider_id: model-context-protocol
          provider_type: remote::model-context-protocol
          config: {}
        - provider_id: rag-runtime
          provider_type: inline::rag-runtime
          config: {}
      vector_io:
        - provider_id: faiss
          provider_type: inline::faiss
          config:
            persistence:
              namespace: vector_io::faiss
              backend: faiss_kv
      files:
        - provider_id: localfs
          provider_type: inline::localfs
          config:
            storage_dir: /tmp/llama-stack-files
            metadata_store:
              table_name: files_metadata
              backend: sql_files
    storage:
      backends:
        kv_default:
          type: kv_sqlite
          db_path: /tmp/kvstore.db
        sql_default:
          type: sql_sqlite
          db_path: /tmp/sql_store.db
        sql_files:
          type: sql_sqlite
          db_path: /rag-content/vector_db/rhdh_product_docs/1.9/files_metadata.db
        faiss_kv:
          type: kv_sqlite
          db_path: /rag-content/vector_db/rhdh_product_docs/1.9/faiss_store.db
      stores:
        metadata:
          namespace: registry
          backend: faiss_kv
        inference:
          table_name: inference_store
          backend: sql_default
          max_write_queue_size: 10000
          num_writers: 4
        conversations:
          table_name: openai_conversations
          backend: sql_default
    registered_resources:
      models:
        - model_id: sentence-transformers/all-mpnet-base-v2
          metadata:
            embedding_dimension: 768
          model_type: embedding
          provider_id: sentence-transformers
          provider_model_id: /rag-content/embeddings_model
      tool_groups:
        - provider_id: rag-runtime
          toolgroup_id: builtin::rag
      vector_dbs:
        - vector_db_id: rhdh-product-docs-1_8
          embedding_model: sentence-transformers/all-mpnet-base-v2
          embedding_dimension: 768
          provider_id: faiss
    server:
      auth:
      host:
      port: 8321
      quota:
      tls_cafile:
      tls_certfile:
      tls_keyfile:

Mount the config map to your Llama Stack container at /app-root/run.yaml to make sure it overrides the default image file:

    name: llama-stack
    volumeMounts:
      - mountPath: /app-root/run.yaml
        subPath: run.yaml
        name: llama-stack-config

Configure the required volume:

    volumes:
      - name: llama-stack-config
        configMap:
          name: llama-stack-config

where:

llama-stack-config: The config map where you added the new no-guard configuration file.

- Restart the deployment if it does not trigger an automatic rollout.
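The no-guard configuration relies on two environment substitution forms: ${env.NAME:=default} and ${env.NAME:+value}. The following Python sketch illustrates how these forms are commonly understood to resolve, assuming that := supplies a default when the variable is unset or empty and that :+ yields the value only when the variable is set. It is a simplified illustration with a hypothetical function name, not the Llama Stack implementation, so confirm the exact behavior against your Llama Stack version.

```python
import re

# Matches ${env.NAME}, ${env.NAME:=default}, and ${env.NAME:+value}.
_ENV_PATTERN = re.compile(
    r"\$\{env\.(?P<name>[A-Za-z_][A-Za-z0-9_]*)(?::(?P<op>[=+])(?P<arg>[^}]*))?\}"
)


def resolve_env_placeholders(text: str, env: dict[str, str]) -> str:
    """Resolve placeholders in text by using the assumed semantics described above."""

    def _substitute(match: re.Match) -> str:
        name, op, arg = match.group("name", "op", "arg")
        value = env.get(name)
        if op == "=":  # Use the default when the variable is unset or empty.
            return value if value else arg
        if op == "+":  # Emit the value only when the variable is set.
            return arg if value else ""
        return value or ""

    return _ENV_PATTERN.sub(_substitute, text)


print(resolve_env_placeholders("${env.VLLM_MAX_TOKENS:=4096}", {}))
print(resolve_env_placeholders("${env.ENABLE_VLLM:+vllm}", {"ENABLE_VLLM": "true"}))
```

Under these assumptions, a provider_id such as ${env.ENABLE_OLLAMA:+ollama} resolves to an empty value unless ENABLE_OLLAMA is set, which is how a single file can describe several optional inference providers.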

7.3. Best results for assistant queries

To resolve technical blockers and accelerate development tasks, you must structure your queries to give specific context to the AI assistant. Using precise prompts makes sure that Developer Lightspeed for RHDH generates relevant code snippets, architectural advice, or platform-specific instructions.

Use the following strategies to improve the accuracy of the assistant’s output during your development workflow:

- Specify technologies: Instead of asking "How do I use templates?", ask "How do I create a Software Template that scaffolds a Node.js service with a CI/CD pipeline?".

- Give context: Include details about your environment, such as "I am deploying to OpenShift; how do I set up my catalog-info.yaml to show pod health?".

- Use conversation context: Ask follow-up questions to refine an earlier answer. For example, if the assistant gives a code snippet, you can ask "Now rewrite that using TypeScript interfaces."

- Validate with citations: Check the provided documentation links and citations in the response to verify that the generated advice aligns with your organization’s official standards.

- Improve assistant accuracy: Rate the utility of responses by selecting the Thumbs up or Thumbs down icons. This feedback helps tune the model for your organization’s specific requirements.

To keep your data secure, do not include sensitive personal information, plain text credentials, or confidential business data in your queries.

7.4. AI response monitoring and context management

Developer Lightspeed for RHDH provides features to track the AI reasoning process and keep the context of your development tasks.

- Thinking cards: An expandable thinking card is displayed while the AI processes a query. A pulse animation indicates the reasoning phase. You can expand the card to view detailed reasoning or collapse it to minimize screen clutter.

- Tool call transparency: An expandable card displays details for Model Context Protocol (MCP) tool calls, which you can use to monitor background processes.

- Context-aware citations: Retrieval-Augmented Generation (RAG) citations appear only when the AI uses internal documentation. This makes sure that general knowledge responses remain concise.

- Context preservation during model changes: When you select a different AI model, Developer Lightspeed for RHDH starts a new conversation. This keeps your earlier chats available in your history.

- Structural readability: The interface formats headings and bullet points automatically to make sure responses are scannable.

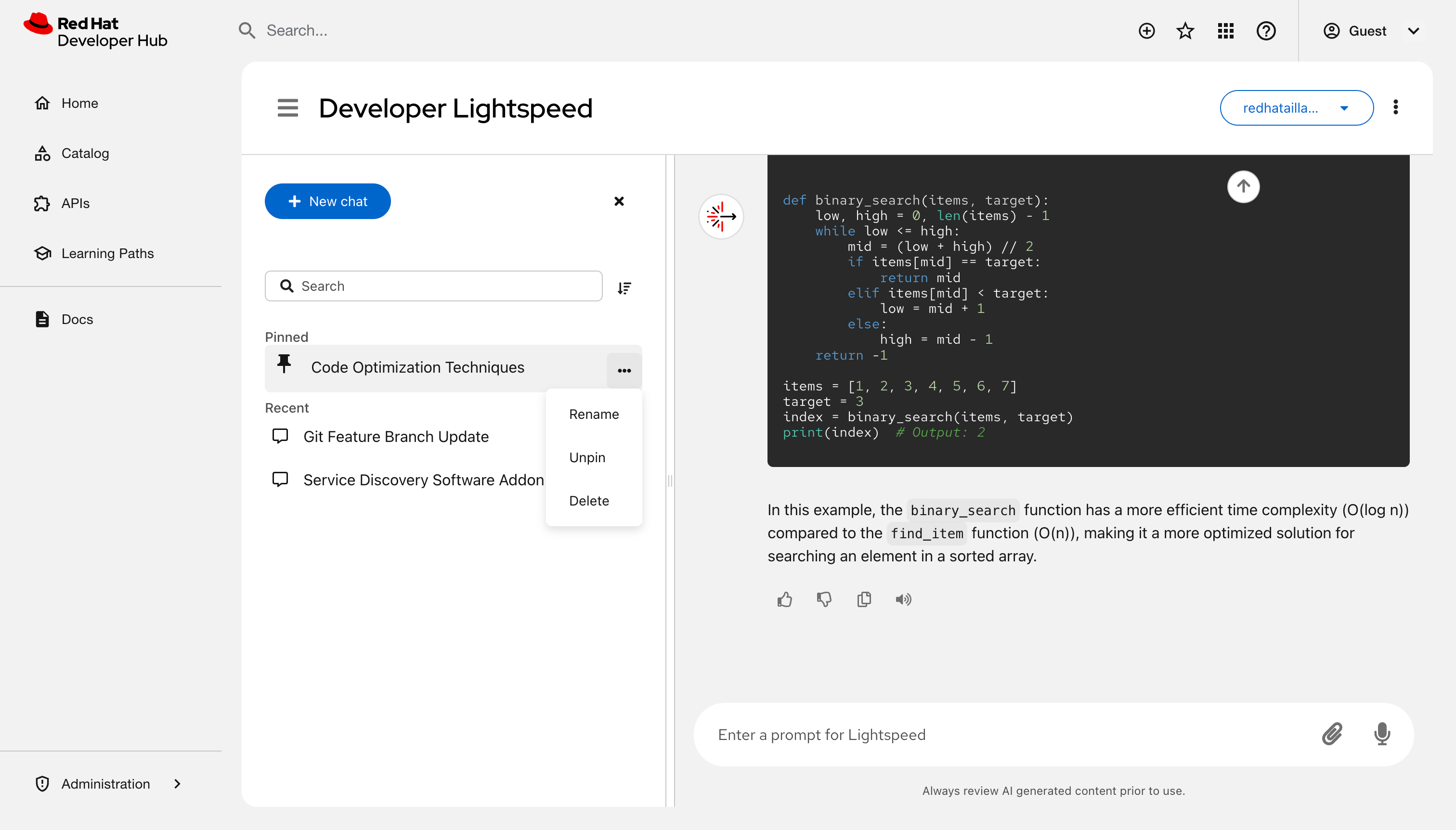

7.5. Manage chats

Manage your chat history and configuration in RHDH to organize your workspace, resume earlier tasks, or find past solutions.

Prerequisites

- You have configured the Developer Lightspeed for RHDH plugin in Red Hat Developer Hub.

- You have logged in to the portal.

Procedure

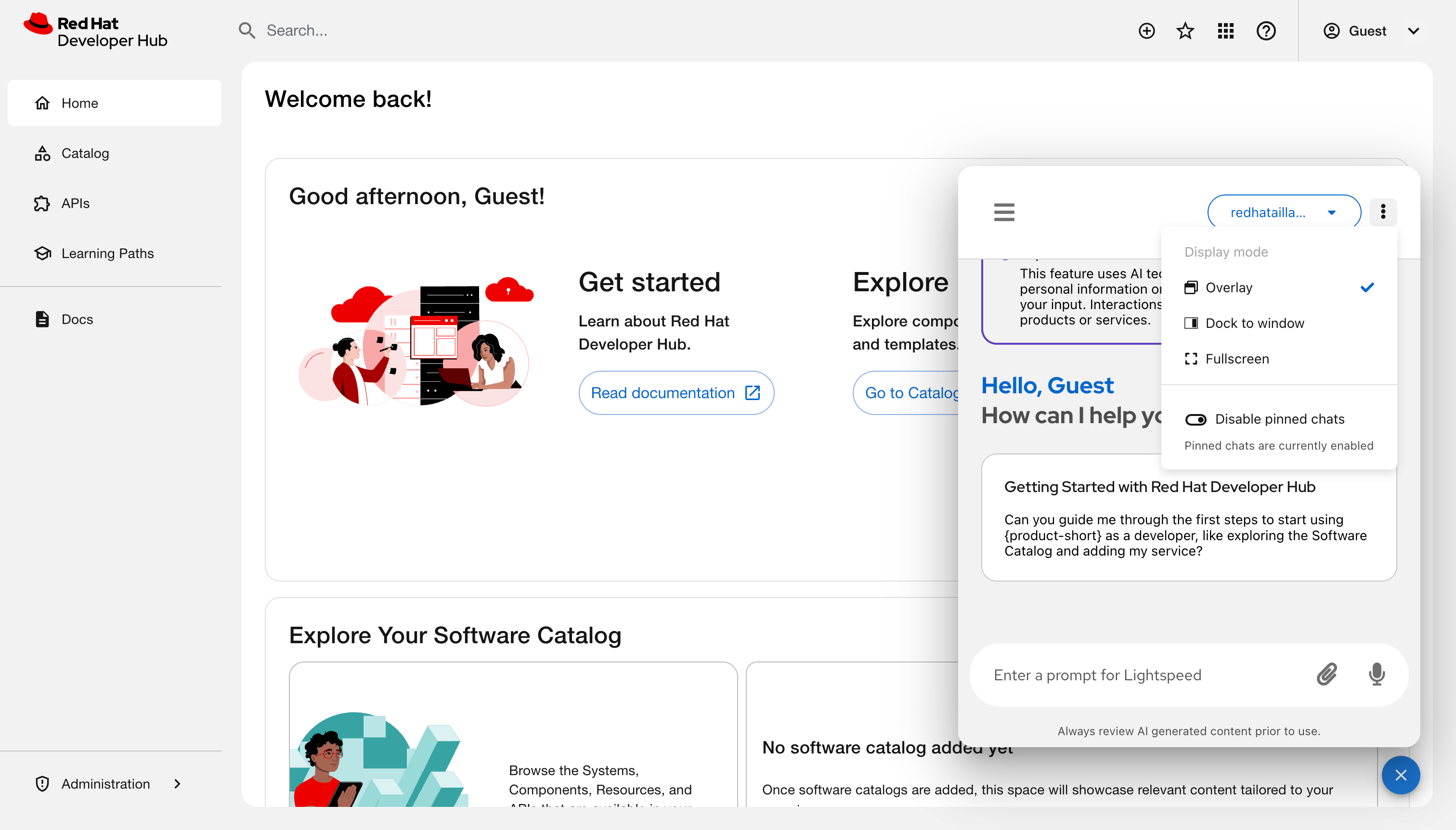

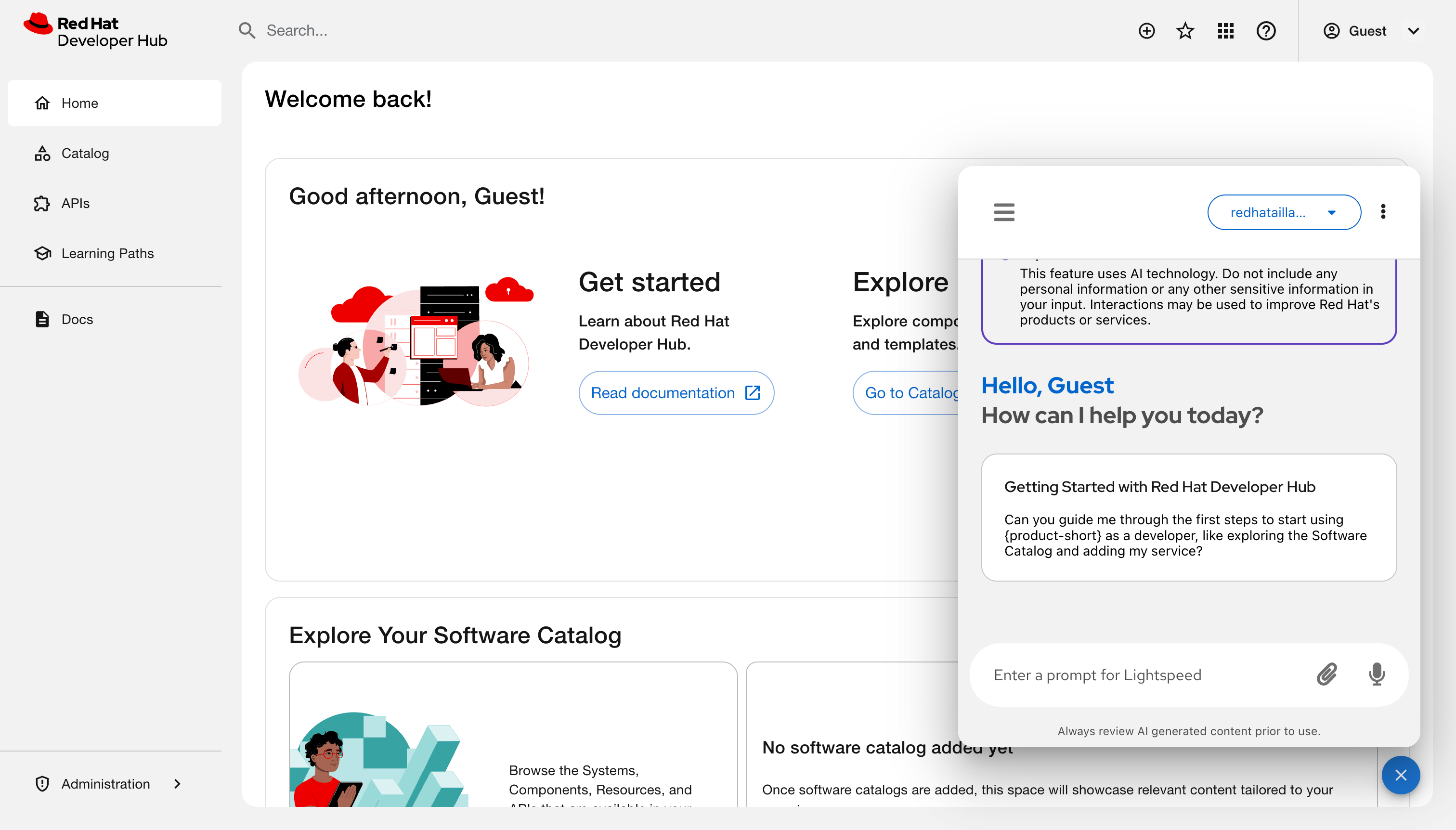

- Click the Lightspeed floating action button (FAB) at the lower right of the screen to open the chat overlay.

Optional: Configure the interface display and server settings:

- Click the Chatbot options icon (⋮) to view chat history or start a new chat.

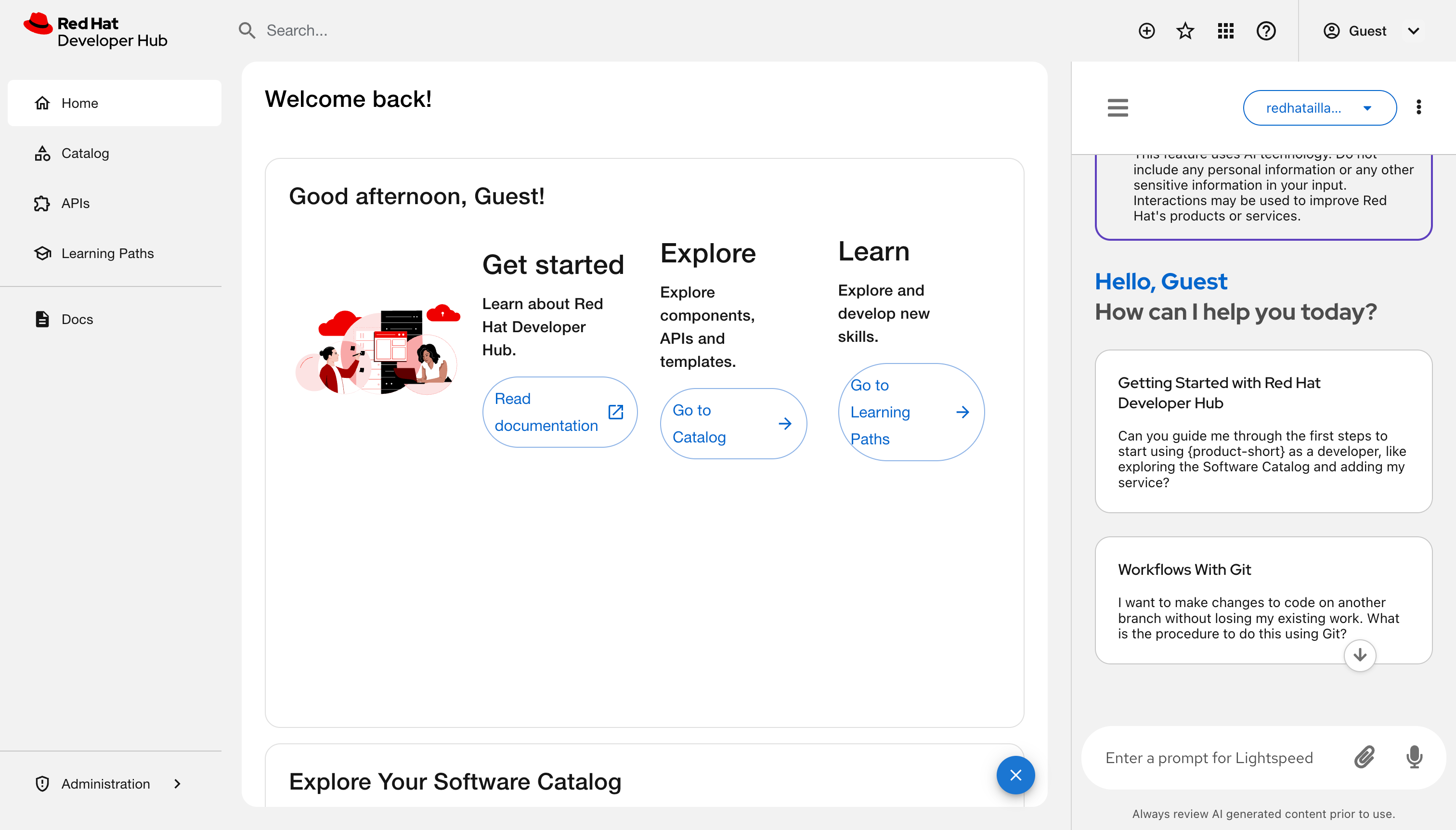

Click the Display mode icon and select any of the following views:

Overlay: A floating window is displayed over the current page content.

Dock to window: A panel attaches to the right side of the screen. Activating this mode automatically closes the quick start panel if it is already open.

Fullscreen: A dedicated page opens for intensive chat sessions. You can bookmark this URL for direct access.

- Optional: Toggle Enable pinned chats/Disable pinned chats to show or hide the pinned chats. The system enables this option by default.

- MCP settings (available only if MCP is configured): Manage Model Context Protocol (MCP) connections.

Start a chat or load an earlier session:

- Enter a prompt: Type a query in the Enter a prompt for Lightspeed chat field and press Enter.

- Use a sample: Click a prompt tile, such as Deploy with Tekton.

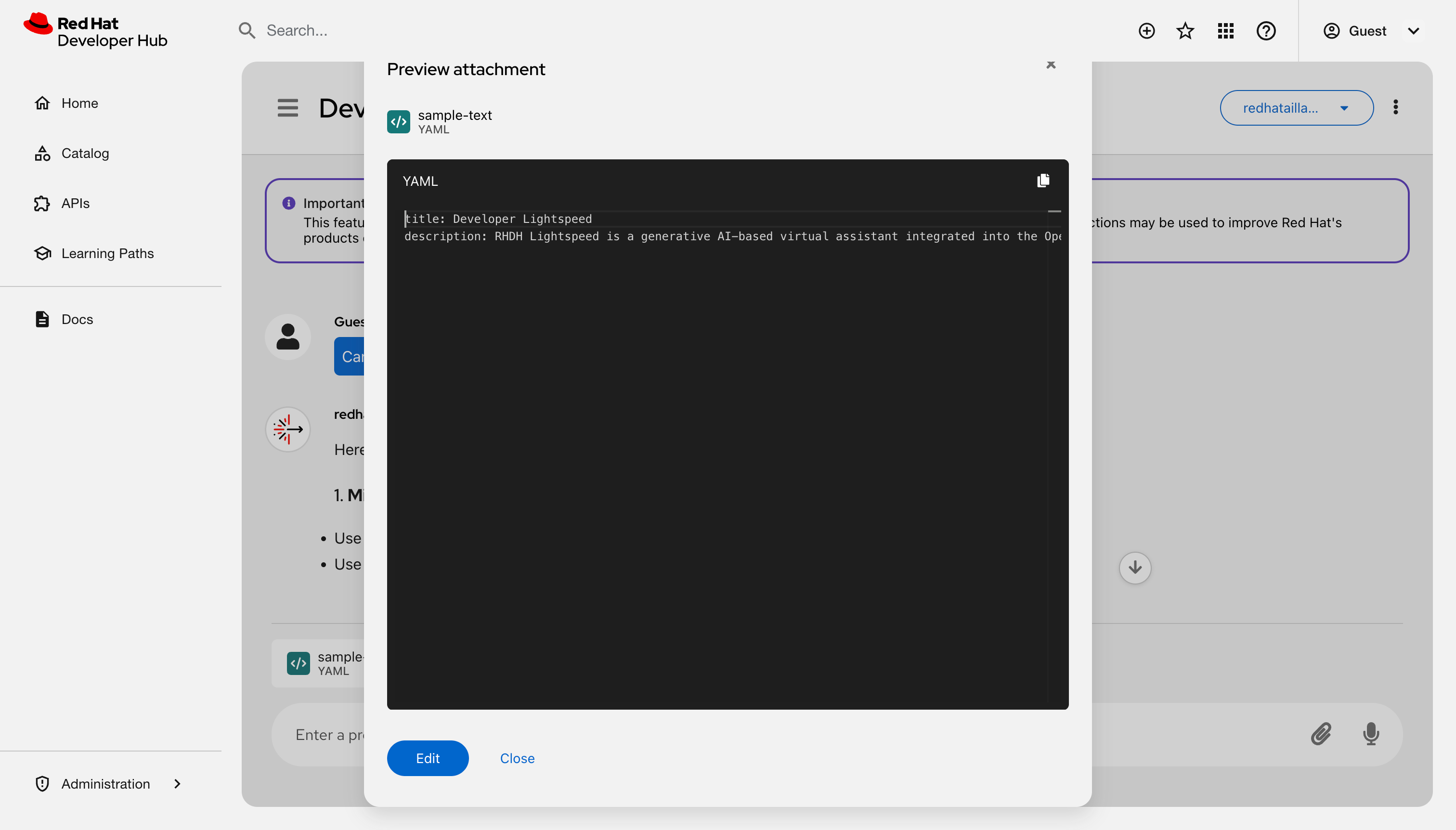

Attach a file: Click Attach to upload a .yaml, .json, or .txt file.

- Click the file name to open the preview modal.

View or edit the content of the file:

- Use voice: Click the Use microphone icon.

- Resume a chat: Select a title from the Recent list.

Organize your chat history:

- Start a new topic: Click New chat to reset the assistant’s context.

- Search history: Enter a keyword in the Search field.

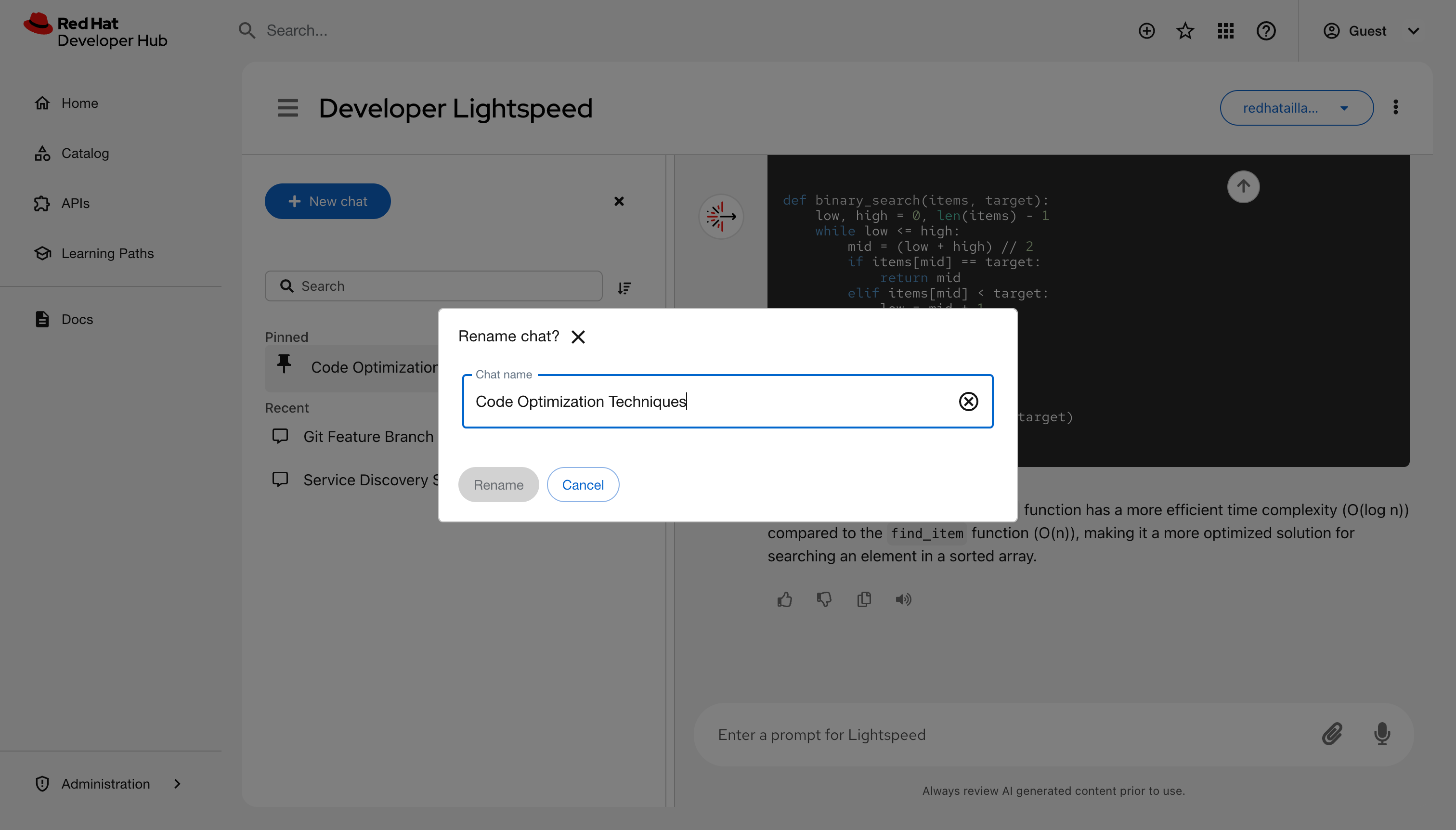

Rename a session: Click Options next to a chat title, select Rename, and enter a new name.

- Pin a chat: Click Options next to a chat title and select Pin. The chat moves to the Pinned group.

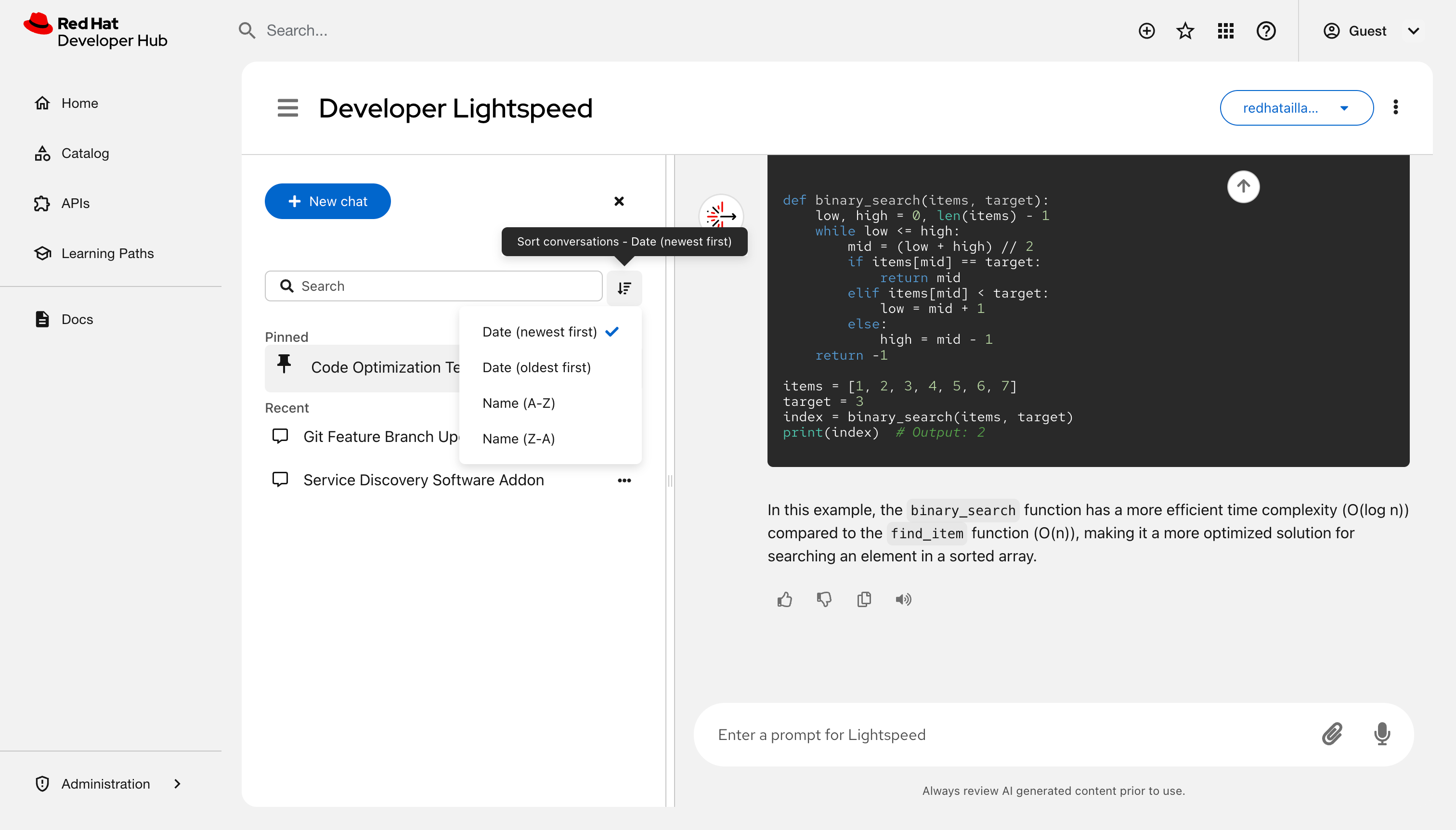

Sort chats: Click Sort control and choose a sorting criterion, such as Date (Newest first).

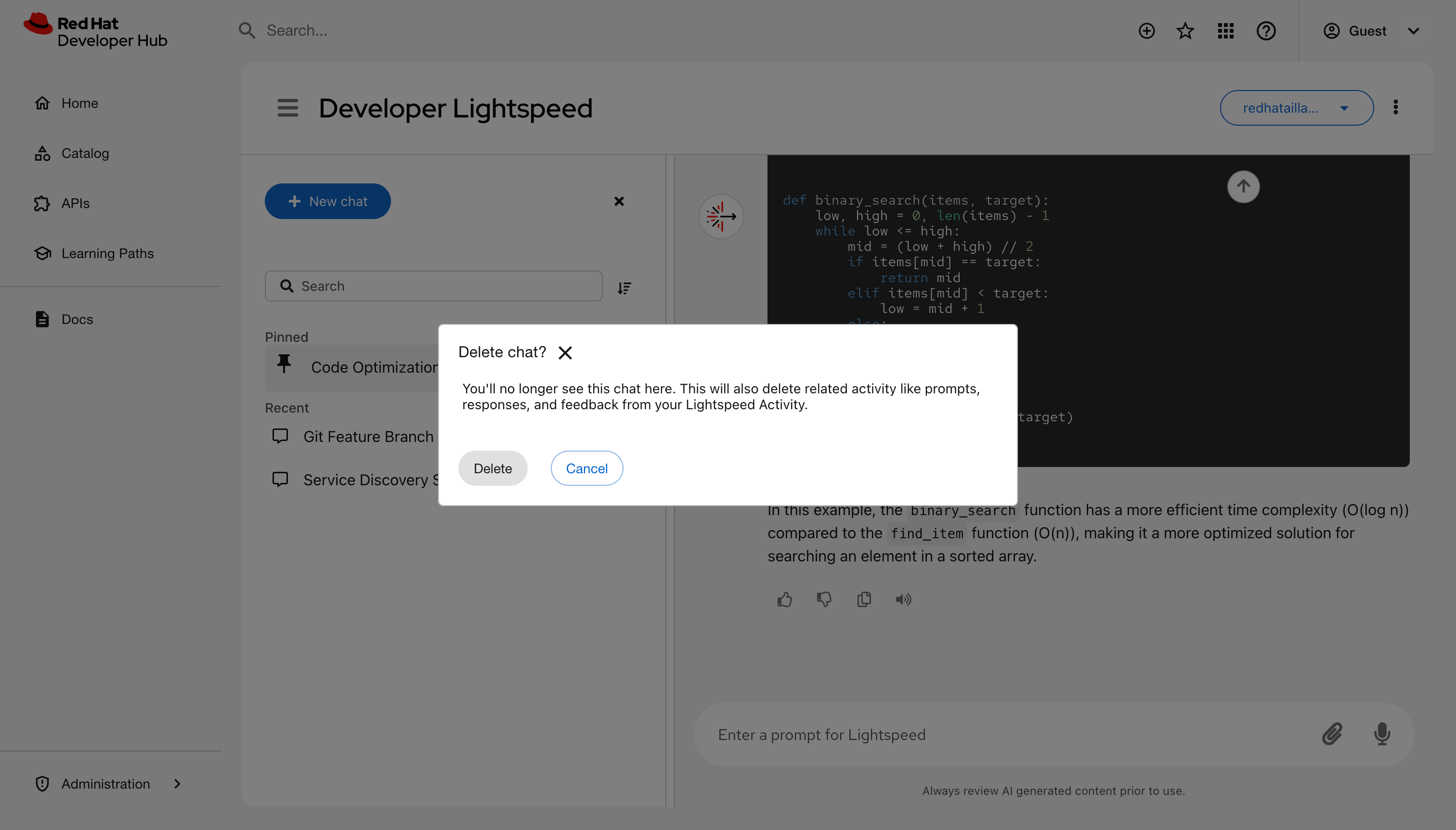

Delete a chat: Click Options next to a chat title and select Delete.

- Hide the chat history section and reduce visual noise: Select the Close icon (x) next to New chat.

- Restore access to your pinned chat: Select the Chat history menu icon.

- Optional: To hide the interface in Overlay or Dock to window mode, click the Close Lightspeed icon (X). In Fullscreen mode, first switch to one of the other modes, and then click the Close Lightspeed icon (X). The system preserves your active query and history.

- Optional: In Fullscreen mode, bookmark the URL in your browser to save a direct link to the chat interface.

Verification

- The main window displays the active chat or selected history.

- The chat history list reflects renamed, pinned, or deleted entries.

7.6. Build a private knowledge base with Developer Lightspeed for RHDH Notebooks

Use Developer Lightspeed for RHDH notebooks to create isolated research environments. These workspaces allow you to analyze project data securely by using a large language model (LLM) grounded in your specific documentation.

This section describes Developer Preview features in the Developer Lightspeed for RHDH Notebooks plugin. Developer Preview features are not supported by Red Hat in any way and are not functionally complete or production-ready. Do not use Developer Preview features for production or business-critical workloads. Developer Preview features provide early access to functionality in advance of possible inclusion in a Red Hat product offering. Customers can use these features to test functionality and provide feedback during the development process. Developer Preview features might not have any documentation, are subject to change or removal at any time, and have received limited testing. Red Hat might provide ways to submit feedback on Developer Preview features without an associated SLA.

For more information about the support scope of Red Hat Developer Preview features, see Developer Preview Support Scope.

7.6.1. Create isolated research workspaces

Organize your work into individual notebook sessions to keep research topics separate and private.

Procedure

- In the RHDH interface, click the Open Lightspeed floating action button (FAB).

- In your Developer Lightspeed page, select the Notebooks tab.

- Click Create a new notebook to start a new workspace.

- Optional: To manage your workspaces, click the More options icon on a notebook card to Rename, Delete, or add Tags to the session.

Verification

- Confirm the new notebook card appears on the My Notebooks dashboard.

7.6.2. Provide project context to the AI

To receive answers tailored to your project, you must upload relevant source material to your active session.

Procedure

- Open a Notebook card from the dashboard.

Add resources by using one of the following methods:

- In the sidebar, click the Add (+) icon.

- In the center of the user interface, click Upload a resource.

Select your sources from your local system or the web:

- Local files: Upload .txt, .md, .pdf, .docx, .log, .yaml, or .json files.

- Web content: Enter a URL to ingest web-based content.

Adhere to the following constraints:

- File size: Individual files or URL content must be 20 MB or smaller.

- Notebook capacity: The total token count per session must not exceed 100k.

- Unsupported content: Avoid scanned PDF images without text, audio, video, and general image files.

- Persistence requirement: The internal SQL and KV stores must be mapped to a persistent backend to maintain the 100k token context across sessions.

- Wait for the system to process and vectorize the files. This might take several seconds for larger PDFs.

Verification

- Ensure the uploaded files appear in the Resources list in the sidebar with a Processed status.
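You can pre-check local files against the upload constraints before you add them to a Notebook. The following Python sketch is illustrative only: the function name check_upload is hypothetical, the extension list and the size limit come from this procedure, and the limit is interpreted as 20 × 1024 × 1024 bytes, which is an assumption. The sketch does not estimate token counts, so it cannot check the per-session token limit.

```python
from pathlib import PurePath

# File types listed in this procedure.
ALLOWED_EXTENSIONS = {".txt", ".md", ".pdf", ".docx", ".log", ".yaml", ".json"}
# Assumption: the 20 MB limit is interpreted as 20 * 1024 * 1024 bytes.
MAX_FILE_BYTES = 20 * 1024 * 1024


def check_upload(filename: str, size_bytes: int) -> list[str]:
    """Return a list of problems; an empty list means the file passes both checks."""
    problems = []
    suffix = PurePath(filename).suffix.lower()
    if suffix not in ALLOWED_EXTENSIONS:
        problems.append(f"unsupported file type: {suffix or '(none)'}")
    if size_bytes > MAX_FILE_BYTES:
        problems.append("file is larger than 20 MB")
    return problems


print(check_upload("design.md", 1024))
print(check_upload("demo.mp4", 30 * 1024 * 1024))
```

To check a real file, pass its size from os.path.getsize or an equivalent call.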

7.6.3. Extract and verify document-based insights

After providing context, use the AI to perform reasoning across your files and verify the accuracy of the responses.

Procedure

- Enter a question in the prompt bar at the bottom of the screen.

Analyze the response. The AI identifies relationships across all uploaded documents and URLs in the session.

Note: If no documents are uploaded, you cannot communicate with the AI.

- To verify accuracy, click the Sources chip to view the specific document excerpts used to generate the answer.

- Manage your workflow by using the history panel in the sidebar to expand or collapse previous interactions.

Verification

- Confirm that the Sources panel displays the correct filenames and text snippets corresponding to the AI’s response.

8. AI model evaluation data to select the right AI model

Use the Red Hat Developer Lightspeed for Red Hat Developer Hub evaluation framework to validate the performance, accuracy, and reliability of Developer Lightspeed for RHDH.

With this automated toolset, you can measure how effectively various large language models (LLMs) answer questions based on Red Hat Developer Hub documentation.

Table 1. Components of the evaluation framework

| Component | Description |
|---|---|
| Evaluation framework | Contains the core logic and scripts used to run evaluations. |
| Datasets | Includes the input files used to test the model. |
| Evaluation metrics integration | Provides scoring through various metrics, including Ragas, DeepEval, and custom metrics. Ragas is the primary metric used to validate Developer Lightspeed for RHDH performance. |

8.1. Configure the evaluation environment to validate model accuracy

Set up the evaluation environment to validate the performance and accuracy of Developer Lightspeed for RHDH. Configure this evaluation to ensure the model correctly interprets documentation and provides dependable answers.

By performing these evaluations, you minimize the risk of the model delivering incorrect or hallucinated information to users in production.

Prerequisites

- Install uv for Python package management (Python 3.11 or later).

Procedure

Clone the evaluation repository and navigate to the directory:

    git clone https://github.com/lightspeed-core/lightspeed-evaluation
    cd lightspeed-evaluation

Synchronize the environment and install dependencies:

uv sync

Configure the environment variables for the judge LLM. You can create a .env file in the root directory or export the keys directly to your terminal.

If you use Gemini, you must set the Gemini API key:

export GEMINI_API_KEY="your-google-api-key"

If you use OpenAI, you must set the OpenAI API key:

export OPENAI_API_KEY="your-key"

Optional: If you test with a live service, set your Developer Lightspeed for RHDH service API key:

export API_KEY="your-lightspeed-service-key"

Verification

Verify that the environment is synchronized and the virtual environment is active:

uv run python --version

The output must return Python 3.11 or later.
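The verification above can be extended to confirm that a judge LLM key is also present. The following Python sketch is a minimal illustration with a hypothetical function name, check_environment. It assumes that one of the two keys named in this procedure, GEMINI_API_KEY or OPENAI_API_KEY, is enough for the judge LLM, and it checks only exported environment variables, not the contents of a .env file.

```python
import os
import sys

# Key names taken from the export examples in this procedure.
JUDGE_KEYS = ("GEMINI_API_KEY", "OPENAI_API_KEY")


def check_environment(env, version) -> list[str]:
    """Return a list of problems found in the interpreter version and environment."""
    problems = []
    if tuple(version[:2]) < (3, 11):
        problems.append("Python 3.11 or later is required")
    if not any(env.get(key) for key in JUDGE_KEYS):
        problems.append("set GEMINI_API_KEY or OPENAI_API_KEY for the judge LLM")
    return problems


# Check the current interpreter and the variables exported in this shell.
for problem in check_environment(os.environ, sys.version_info):
    print(problem)
```

If you save the sketch to a file, you can run it inside the synchronized environment with uv run python followed by the file name.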

8.2. Prepare evaluation datasets to verify AI-generated responses

Prepare evaluation datasets to test the performance of Developer Lightspeed for RHDH. You can use pre-generated AI datasets for specific Red Hat Developer Hub releases or generate custom AI datasets from your own documentation.

Prerequisites

- You must clone the evaluation repository to your local machine.

Procedure

Download pre-generated datasets: Use this method to test the performance of specific RHDH releases. These datasets are generated using Ragas testset generation for RAG.

- In your terminal, navigate to the /dataset folder in the evaluation repository.

- Locate the .evaluation_dataset_yaml files. These files are pre-configured for the evaluation tool. To test a historical release, switch to the corresponding branch.

For example, to access the Red Hat Developer Hub 1.8 dataset, switch to the 1.8 branch.

Important: The main branch contains work-in-progress (WIP) datasets. Avoid using this branch for stable evaluations.

Generate custom datasets: Use this method to create a new test set from your own technical documentation.

- Generate a diverse set of question-and-answer (Q&A) pairs by following the Ragas test data generation documentation.

- Ensure your Q&A pairs match the required format by reviewing the evaluation data structure configuration.

Verification

- Verify that your custom dataset matches the required schema before you start the evaluation run.

8.3. Run performance tests to ensure AI response reliability

Use the evaluation framework to run performance tests in either static mode to evaluate pre-recorded responses or dynamic mode to call a live service.

These evaluations identify performance gaps, allow you to compare different large language models (LLMs), and ensure that Developer Lightspeed for RHDH provides reliable information to users.

Prerequisites

- You must install and configure the evaluation environment.

- You must prepare an evaluation dataset.

Procedure

- Download the system.yaml configuration template from the repository.

Configure the parameters in the system.yaml file based on your evaluation mode:

| Field | Description |
|---|---|
| llm | Defines the judge LLM that scores the responses, such as gemini-2.5-pro. |
| api.enabled | Set to false for static mode to use pre-filled data. Set to true for dynamic mode to call a live service. |
| api.api_base | (Required for dynamic mode only) Provide the URL of your Developer Lightspeed for RHDH service. |
| api.endpoint_type | Specify the service configuration type: streaming or query. |

Execute the evaluation by using the lightspeed-eval command:

    lightspeed-eval \
      --system-config config/system.yaml \
      --eval-data config/evaluation_data.yaml \
      --output-dir ./my_evaluation_results

Verification

- Navigate to the specified output directory and verify that the generated reports contain the model performance scores.
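The mode-dependent rules for system.yaml can be checked before a run. The following Python sketch assumes that the dotted field names map to a nested api block, that the llm field is any non-empty value, and that api.endpoint_type matters only in dynamic mode. These are assumptions for illustration, and the function name check_system_config is hypothetical. The template in the repository remains the authoritative reference for the file structure.

```python
def check_system_config(config: dict) -> list[str]:
    """Return a list of problems for the fields described in this procedure."""
    problems = []
    if not config.get("llm"):
        problems.append("llm: judge LLM is not defined")
    api = config.get("api", {})
    if api.get("enabled"):  # Dynamic mode: the tool calls a live service.
        if not api.get("api_base"):
            problems.append("api.api_base: required in dynamic mode")
        if api.get("endpoint_type") not in {"streaming", "query"}:
            problems.append("api.endpoint_type: use 'streaming' or 'query'")
    return problems


# Example inputs with the assumed nested shape.
static_config = {"llm": "gemini-2.5-pro", "api": {"enabled": False}}
dynamic_config = {"llm": "gemini-2.5-pro", "api": {"enabled": True, "endpoint_type": "batch"}}

print(check_system_config(static_config))
print(check_system_config(dynamic_config))
```

The static example passes, and the dynamic example reports the missing service URL and the unrecognized endpoint type.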

8.4. Analyze evaluation results to identify performance gaps

Determine the performance of Developer Lightspeed for RHDH and identify documentation areas that require model improvement by analyzing evaluation results in the repository. You can use these reports to compare performance across different large language models (LLMs) and topics.

Prerequisites

- You must have access to the developer-lightspeed-evaluation repository.

Procedure

- In the root of the repository, navigate to the version-specific folder within the /evaluation-result directory.

Open the following files to evaluate performance:

- Model Pass Rate: Compare the overall performance between different LLMs.

- Topic Pass Rate: Identify performance trends and gaps within specific documentation areas.

Verification

- Verify that the reports display data visualizations or metrics consistent with your recent evaluation run.
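To make the two report types concrete, the following Python sketch computes a pass rate per model and per topic from a small list of result records. The record fields (model, topic, passed), the sample values, and the function name pass_rates are hypothetical. The actual report files in the repository might use a different layout.

```python
from collections import defaultdict


def pass_rates(results: list[dict], key: str) -> dict[str, float]:
    """Group results by the given field and return the fraction that passed."""
    totals = defaultdict(int)
    passed = defaultdict(int)
    for row in results:
        totals[row[key]] += 1
        passed[row[key]] += bool(row["passed"])
    return {group: passed[group] / totals[group] for group in totals}


# Hypothetical evaluation records for illustration.
sample_results = [
    {"model": "model-a", "topic": "install", "passed": True},
    {"model": "model-a", "topic": "rbac", "passed": False},
    {"model": "model-b", "topic": "install", "passed": True},
    {"model": "model-b", "topic": "rbac", "passed": True},
]

print(pass_rates(sample_results, "model"))
print(pass_rates(sample_results, "topic"))
```

Grouping by model compares LLMs against each other, while grouping by topic shows which documentation areas produce the most failures.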

8.5. Evaluation metrics and historical data reference

Use the available metrics to evaluate the performance of Developer Lightspeed for RHDH at the conversation turn level.

These metrics provide a standardized way to measure the accuracy and reliability of the generated responses and the retrieved content.

| Metric | Description |
|---|---|
| | Measures how well the answer is derived solely from the retrieved context. |
| | Measures whether the retrieved context contains all information required to answer the question. |
| | Verifies if the retrieved documentation chunks are relevant to the user query. |
| | Measures the ratio of useful information within the retrieved documentation chunks. |
| | Compares the generated response against the expected ground-truth response. This custom metric is implemented in the evaluation tool. |

8.6. Release report and historical data

Use the latest Q&A dataset and evaluation results to monitor the current performance of Developer Lightspeed for RHDH.

Access version-specific branches that contain the datasets and evaluation results required to track improvements or regressions across product releases.

The main branch contains work-in-progress data for versions currently under development. For stable evaluations or historical tracking, you must switch to the branch associated with a specific release.

| Release version | Branch name | Data included |
|---|---|---|
| Latest stable | Most recent version branch | The current question and answer (Q&A) dataset and evaluation results. |
| Historical | Previous version branches | Datasets and evaluation results for previous releases to track regressions. |

9. Appendix: LLM requirements

This appendix provides information about large language model (LLM) providers compatible with Developer Lightspeed for RHDH, including OpenAI, Red Hat OpenShift AI, Ollama, and vLLM.

9.1. Large language model (LLM) requirements

Developer Lightspeed for RHDH follows a Bring Your Own Model approach, requiring you to provide access to a large language model (LLM). You must configure your preferred LLM provider during installation.

LLMs are usually provided by a service or server. You can configure the underlying Llama Stack server to integrate with several LLM providers that offer compatibility with the OpenAI API, including the following inference providers:

- OpenAI (cloud-based inference service)

- Red Hat OpenShift AI (enterprise model builder and inference server)

- Red Hat Enterprise Linux AI (enterprise inference server)

- Ollama (popular desktop inference server)

- vLLM (popular enterprise inference server)

- Gemini (available through Vertex AI)

9.2. OpenAI

OpenAI offers a range of generative AI models, such as GPT 5, which can be used to provide inference services for applications like Developer Lightspeed for RHDH.

To use OpenAI with Developer Lightspeed for RHDH, you need access to the OpenAI API platform.

9.3. Ollama

Ollama is an open-source tool that simplifies running large language models (LLMs) locally. It provides a command-line interface for downloading, managing, and running open-source models such as Llama 3 and Mistral.

The open source Ollama server, in container form, provides a convenient local testbed for LLMs that is accessible and easy to control.

9.4. vLLM

vLLM is an open-source, high-throughput serving engine for large language models (LLMs). It optimizes memory usage and increases the number of concurrent requests an LLM can handle.

10. Appendix About user data security

This appendix explains how Developer Lightspeed for RHDH handles user data, feedback collection, and the Bring Your Own Model approach for LLM providers.

10.1. About data use

Developer Lightspeed for RHDH sends your chat messages to the large language model (LLM) provider configured for your environment. These messages might contain information about your users, cluster, or business environment.

Developer Lightspeed for RHDH has limited capabilities to filter or redact the information you provide to the LLM. Do not enter information into Developer Lightspeed for RHDH that you do not want to send to the LLM provider. To remind end users not to share private or confidential information, Developer Lightspeed for RHDH begins each new chat with an 'Important' message asking them not to “include personal or sensitive information” in their chat messages.

10.2. About feedback collection

Developer Lightspeed for RHDH stores user feedback submissions, including scores and text, locally in the Pod file system. Red Hat does not have access to the collected feedback data.

10.3. About Bring Your Own Model

Developer Lightspeed for RHDH uses a Bring Your Own Model approach, letting you connect Lightspeed Core Service to any OpenAI API-compatible inference service. The only technical requirements for inference services are:

- The service must conform to the OpenAI API specification.

- The service must be configured correctly following the installation and configuration instructions.

There are many commercial and open source inference services that support the OpenAI API specification for chat completions. The cost, performance, and security of these services can differ and it is up to you to choose, through evaluation and testing, the inference service that best meets your company’s needs.
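To illustrate what conforming to the OpenAI API specification for chat completions means in practice, the following Python sketch builds, but does not send, a minimal chat completions request by using only the standard library. The base URL, API key, and model name are placeholders, the function name build_chat_request is hypothetical, and the sketch assumes that the base URL already includes the version prefix, for example /v1.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build a POST request for an OpenAI-compatible chat completions endpoint."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Placeholder values; replace them with the details of your inference service.
request = build_chat_request("http://localhost:8000/v1", "example-key", "example-model", "Hello")
print(request.get_method(), request.full_url)
```

Passing the request to urllib.request.urlopen sends it. A service that accepts this request shape and returns a choices list in its JSON response is a reasonable candidate for evaluation with Developer Lightspeed for RHDH.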

10.4. Your responsibility

All of the information your users share in their questions and responses with Developer Lightspeed for RHDH is shared with the LLM inference service you configured. You are responsible for ensuring compliance with your company’s policies regarding the sharing of data with your chosen inference service.